Original Link: https://www.anandtech.com/show/7834/nvidia-geforce-800m-lineup-battery-boost

NVIDIA’s GeForce 800M Lineup for Laptops and Battery Boost

by Jarred Walton on March 12, 2014 12:00 PM EST

Introducing NVIDIA’s GeForce 800M Lineup for Laptops

Last month NVIDIA launched the first of many Maxwell parts to come with the desktop GTX 750 and GTX 750 Ti, which brought a new architecture to NVIDIA’s parts, but one that isn’t radically different from the previous generation’s Kepler. While the features may be largely the same, however, NVIDIA did come out with a renewed focus on efficiency. The result was roughly a doubling of performance per Watt, with the GTX 750 Ti being nearly twice as fast as the GTX 650 with only slightly higher power draw (and some of that most likely comes from the increased load on the rest of the system thanks to the higher frame rates). That renewed focus on efficiency is nice and all on the desktop, but in my opinion where it’s really going to pay dividends is when we get the mobile SKUs.

Today’s launch of the 800M series will give us the first taste of what’s to come, but unfortunately there are two minor issues. One is that we don’t have any 800M hardware in hand for testing (yet – we should get a notebook in the near future); the second problem is that, as is typically the case, 800M will be a mix of both Kepler and Maxwell parts. The Kepler parts aren’t straight recycling of existing SKUs, however, as NVIDIA has a new feature that’s coming out with all of the GTX 800M parts: Battery Boost. But before we get into the details of Battery Boost, let’s cover the various parts. Both "regular" (NVIDIA has dropped the "GT" branding of their mainstream parts) and GTX 800M chips are being announced today, though we of course still need these to show up in shipping laptops; we’ll start at the high end and work our way down.

| NVIDIA GeForce GTX 800M Specifications | | | | | |
|---|---|---|---|---|---|
| Product | GTX 880M | GTX 870M | GTX 860M | GTX 860M | GTX 850M |
| Process | 28nm | 28nm | 28nm | 28nm | 28nm |
| Architecture | Kepler | Kepler | Kepler | Maxwell | Maxwell |
| Cores | 1536 | 1344 | 1152 | 640 | 640 |
| GPU Clock | 954MHz + Boost | 941MHz + Boost | 797MHz + Boost | 1029MHz + Boost | 876MHz + Boost |
| RAM Clock | 2.5GHz | 2.5GHz | 2.5GHz | 2.5GHz | 2.5GHz |
| RAM Interface | 256-bit | 192-bit | 128-bit | 128-bit | 128-bit |
| RAM Technology | GDDR5 | GDDR5 | GDDR5 | GDDR5 | GDDR5 |
| Maximum RAM | 4GB | 3GB | 2GB | 2GB | 2GB |
| Features | GPU Boost 2.0, Battery Boost, GameStream, ShadowPlay, Optimus, PhysX, CUDA, SLI, GeForce Experience | GPU Boost 2.0, Battery Boost, GameStream, ShadowPlay, Optimus, PhysX, CUDA, SLI, GeForce Experience | GPU Boost 2.0, Battery Boost, GameStream, ShadowPlay, Optimus, PhysX, CUDA, SLI, GeForce Experience | GPU Boost 2.0, Battery Boost, GameStream, ShadowPlay, Optimus, PhysX, CUDA, SLI, GeForce Experience | GPU Boost 2.0, Battery Boost, GameStream, ShadowPlay, Optimus, PhysX, CUDA, GeForce Experience |

At the top, the GTX 880M carries on from the successful GTX 780M, using a fully enabled GK104 chip with 1536 cores. The difference is that thanks to improvements in yields and other refinements, the GTX 880M will launch with a base clock of 954MHz, which is a pretty significant 20% bump over the 797MHz base clock of the GTX 780M. Otherwise, the only real change will be support for Battery Boost. This is really the only chip where we won’t see any major performance improvement relative to the 700M part – we get a theoretical 20% shader performance increase and that’s about it.

GTX 870M follows a slightly different pattern, using the same GK104 core but with one SMX disabled, leaving us with 1344 cores. Along with the loss of one SMX, the GTX 870M cuts the memory interface down to 192 bits. (Interestingly, this is the same core count as the GTX 775M found in Apple’s iMac, only with a 192-bit memory interface, but to my knowledge the GTX 775M never shipped in a notebook.) The previous generation 770M only had 960 cores running at 811MHz + Boost, so overall the 870M should provide a significant boost in performance relative to the previous generation – around 62% more shader processing power and 25% more memory bandwidth as well!

Where things get a little [*cough*] interesting is when we get to the GTX 860M. As we’ve seen in the past, NVIDIA will have two different overlapping models of the 860M available, and they’re really not very similar (though pure performance will probably be pretty close). On the one hand, the Kepler-based 860M will use GK104 with yet another SMX and a memory channel disabled, giving us 1152 cores running at 797MHz + Boost and a 128-bit memory interface. This will probably result in performance relatively close to the previous generation GTX 770M (slightly higher shader performance but slightly less memory bandwidth).
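The shader comparisons in the preceding paragraphs can be reproduced with a quick back-of-the-envelope calculation. The sketch below assumes theoretical shader throughput scales as cores times base clock and ignores Boost clocks, memory bandwidth, and driver differences; the `Gpu` class and `gain_pct` helper are illustrative names, not anything from NVIDIA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    cores: int
    base_mhz: int  # base clock only; Boost is ignored in this estimate

    @property
    def throughput(self) -> int:
        # Relative shader throughput: cores x base clock (arbitrary units).
        return self.cores * self.base_mhz


def gain_pct(new: Gpu, old: Gpu) -> float:
    """Percentage increase in theoretical shader throughput of new over old."""
    return (new.throughput / old.throughput - 1.0) * 100.0


# Core counts and base clocks as quoted in the article.
gtx_780m = Gpu("GTX 780M", 1536, 797)
gtx_880m = Gpu("GTX 880M", 1536, 954)
gtx_770m = Gpu("GTX 770M", 960, 811)
gtx_870m = Gpu("GTX 870M", 1344, 941)
gtx_860m_kepler = Gpu("GTX 860M (Kepler)", 1152, 797)

for new, old in [
    (gtx_880m, gtx_780m),
    (gtx_870m, gtx_770m),
    (gtx_860m_kepler, gtx_770m),
]:
    print(f"{new.name} vs {old.name}: {gain_pct(new, old):+.1f}%")
```

By this estimate the GTX 880M and GTX 870M land at roughly 20% and 62% over their predecessors, and the Kepler GTX 860M comes out a little under 20% ahead of the GTX 770M on shader throughput alone.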

The second GTX 860M will be a completely new Maxwell part, using the same GM107 as the desktop GTX 750/750 Ti with all SMM units active. That gives us 640 cores running at 1029MHz + Boost, which interestingly is faster than the base clock of the desktop GTX 750 Ti (1020MHz). Memory bandwidth doesn’t quite keep up with the desktop card, and of course overclocking is something that will almost certainly result in higher performance potential on desktops, but in general the Maxwell GTX 860M should perform very much like the GTX 750 Ti – and likely at a lower power envelope as well. Even if both GTX 860M parts deliver a similar level of performance, the Maxwell variant should do so while using less power, so that's the one I'd recommend. NVIDIA states that the 860M will be around 40% faster than the GTX 760M.

Finally, the last GTX part being launched today is the GTX 850M. Yes, that’s right: the “x50M” has now been moved from the (now defunct) GT class to the GTX class. This is partly being done in order to make the GTX 850M look better (i.e. marketing), but it’s also being done as a way to segment software feature sets – as we’ll see in a moment, the mainstream 800M GPUs do not support GameStream or ShadowPlay. Also note that the GTX 850M is the only GTX part that does not support SLI, as that feature is only present in the GTX 860M and above. (And while I’m discussing the Features aspect, GFE is my abbreviation for “GeForce Experience”.) There’s a bit more marketing as well, as NVIDIA compares the performance of the new GTX 850M with the previous generation GT 750M in their slides, where perhaps a better comparison would be the GTX 760M with the GTX 765M going up against the GTX 860M. It’s not particularly important in the grand scheme of things, however.

Moving past the naming aspect of the GTX 850M, the specifications are basically the same as the Maxwell GTX 860M, only with a lower core clock of 876MHz. One nice benefit of moving to the GTX class is that the 850M will require the use of GDDR5. With previous generation mobile GPUs, NVIDIA often allowed OEMs to use either GDDR5 or DDR3. While in theory the gaming experience between the two would be “similar”, that really depends on the game and the settings. I know from experience that in some cases a GT 740M GDDR5 can end up performing nearly twice as fast as a GT 740M DDR3 laptop. Basically, DDR3 GPUs really shouldn’t be used in anything with more than a 1366x768 resolution display, and frankly 1366x768 should die a fast death – preferably yesterday, if I had my way. DDR3 will continue to be used in the mainstream 800M GPUs, and it appears GDDR5 is no longer even an option (maybe?); not surprisingly, NVIDIA states that the GTX 850M is on average 70% faster than the 840M. And that brings us to the second tier of mobile 800M GPUs: the "mainstream" class.

| NVIDIA GeForce "Mainstream" 800M Specifications | | | |
|---|---|---|---|
| Product | 840M | 830M | 820M |
| Process | 28nm | 28nm | 28nm |
| Architecture | Maxwell | Maxwell | Fermi |
| Cores | ? | ? | 96 |
| GPU Clock | ? | ? | 719-954MHz |
| RAM Clock | ? | ? | 2000MHz |
| RAM Interface | 64-bit | 64-bit | 64-bit |
| RAM Technology | DDR3 | DDR3 | DDR3 |
| Maximum RAM | 2GB | 2GB | 2GB |
| Features | GPU Boost 2.0, Optimus, PhysX, CUDA, GFE | GPU Boost 2.0, Optimus, PhysX, CUDA, GFE | GPU Boost 2.0, Optimus, PhysX, CUDA, GFE |

Obviously, NVIDIA is being a little coy with their specifications for these 800M parts. They were good enough to tell us that both the 840M and 830M will use Maxwell-based GPUs, but that’s as far as they would go. I’d guess we’ll see 512 core Maxwell GM107 parts in both of those, although perhaps they might drop another SMM and run with 384 cores on one (or both?) of those; we’ll have to wait and see. The 64-bit memory interface is going to be a pretty severe bottleneck as well, and I’m not sure the new 840M will even be able to consistently outperform the previous generation GT 740M – particularly if the 740M used GDDR5. Actually, scratch that; I’m almost certain a GT 740M GDDR5 solution will be faster than the 840M DDR3, though perhaps not as energy efficient.

And just in case you don’t particularly care for having a modern GPU, Fermi rides again and is available in the 820M. NVIDIA didn’t disclose specs in the launch information, but this part has already been launched so we know what's in here. Of course, this is Fermi in 2014 so really – who cares? 96 cores is at least better than 48 cores (705M), but NVIDIA is at least flirting with iGPU levels of performance with the 820M. In my book, if you don’t need anything more than an 820M, you probably don’t need the 820M!

You can see NVIDIA's images/renders of the various chips in the gallery below:

Wrapping up the specifications overview, NVIDIA was nice and forthcoming with estimates of relative performance. There will likely be exceptions, depending on the game and settings you choose to test, but here’s a nice table summarizing NVIDIA’s estimates:

| NVIDIA's Performance Estimates for the 800M Series | | | |
|---|---|---|---|
| GPU | % Increase Over Next GPU | % of 820M | % of GTX 760M |
| GTX 880M | 20% | 641% | 227% |
| GTX 870M | 35% | 534% | 189% |
| GTX 860M | 15% | 396% | 140% |
| GTX 850M | 70% | 344% | 122% |
| 840M | 35% | 203% | 72% |
| 830M | 50% | 150% | 53% |
| 820M | N/A | 100% | 35% |
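The "% of 820M" column appears to be the per-step increases compounded from the bottom of the stack upward. The following sketch checks that reading; the `cumulative` function and the `steps` list are illustrative, and small differences from the table are expected because NVIDIA's per-step figures are rounded.

```python
# Per-step gains over the next GPU down, lowest part first (from the table).
steps = [
    ("830M", 50),
    ("840M", 35),
    ("GTX 850M", 70),
    ("GTX 860M", 15),
    ("GTX 870M", 35),
    ("GTX 880M", 20),
]


def cumulative(steps, base_name="820M"):
    """Compound each step's gain, with the base part defined as 100%."""
    result = {base_name: 100.0}
    level = 100.0
    for name, pct in steps:
        level *= 1 + pct / 100
        result[name] = level
    return result


relative = cumulative(steps)
for name, pct in relative.items():
    print(f"{name}: {pct:.0f}% of 820M")
```

Each compounded value lands within one percentage point of the corresponding table entry, which suggests the columns are at least internally consistent.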

This is actually a pretty useful set of estimates (assuming they're correct), as it allows us to immediately see that all of the new GTX GPUs should be quite a bit faster than the GTX 760M, which was a fairly decent mobile GPU. We can also see that the gulf between the mainstream and GTX classes remains quite large. I’m still a bit skeptical of the 840M with DDR3 actually delivering good performance, as a 64-bit interface is a huge bottleneck. Assuming this is again DDR3-2000 (most GPUs with DDR3 top out at DDR3-2000), we’re talking about a feeble 16GB/s of memory bandwidth – that’s lower than what most desktops and laptops now have for system memory, as DDR3-1600 with a 128-bit interface will do 25.6GB/s. Ouch. Of course it will depend on what settings you want to run at; for me, I don’t mind using medium quality in most games, but the low quality settings can often look quite awful.
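The bandwidth figures above follow from transfer rate times bus width. Here is a minimal sketch of that arithmetic; the `bandwidth_gbs` helper is an illustrative name, and the GDDR5 line assumes the 2.5GHz memory clock in the GTX table corresponds to 5000 MT/s effective, which is not stated in NVIDIA's launch material.

```python
def bandwidth_gbs(transfer_rate_mts: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: transfers per second x bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer / 1e9


ddr3_2000_64bit = bandwidth_gbs(2000, 64)    # mainstream 800M with DDR3
ddr3_1600_128bit = bandwidth_gbs(1600, 128)  # dual-channel system memory
# Assumption: 2.5GHz GDDR5 is treated as 5000 MT/s effective.
gddr5_128bit = bandwidth_gbs(5000, 128)      # GTX 850M / GTX 860M class

print(f"DDR3-2000, 64-bit:  {ddr3_2000_64bit:.1f} GB/s")
print(f"DDR3-1600, 128-bit: {ddr3_1600_128bit:.1f} GB/s")
print(f"GDDR5, 128-bit:     {gddr5_128bit:.1f} GB/s")
```

Under that assumption, a 128-bit GDDR5 part would have about five times the bandwidth of a 64-bit DDR3-2000 part, which fits with the large gap NVIDIA estimates between the GTX 850M and the 840M.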

New for GTX 800M: Battery Boost

While the core hardware features have not changed in most respects – Maxwell and Kepler are both DX11 parts that implement some but not all of the DX 11.1 features – there is one exception. NVIDIA has apparently modified the hardware in the new GTX 800M chips to support a feature they’re calling Battery Boost. The short summary is that with this new combination of software and hardware features, laptops should be able to provide decent (>30 FPS) gaming performance while delivering 50-100% more battery life during gaming.

This could be really important for laptop gaming, as many people have moved to tablets and smartphones simply because a laptop doesn’t last long enough off AC power to warrant consideration. Battery Boost isn’t going to suddenly solve the problem of a high-end GPU and CPU using a significant amount of power, but instead of one hour (or less) of gaming we could actually be looking at a reasonable 2+ hours. Regardless, NVIDIA is quite excited to see where things go with Battery Boost, and we’ll certainly be testing the first GTX 800M laptops to provide some of our own measurements. Let’s get into some of the details of the implementation.

First, Battery Boost will require the use of NVIDIA’s GeForce Experience (GFE) software. You can see the various settings in the above gallery, though the screenshots are provided by NVIDIA so we have not yet been able to test this. Battery Boost builds on some of the already existing features like game profiles and optimizations, but it adds in some additional tweaks. Each GFE game profile on a laptop with Battery Boost will now have options for plugged in and battery power settings, and along with that setting is the ability to set a target frame rate (with 30 being commonly recommended as a nice balance between smoothness and reducing power use).
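To see why a target frame rate can save power at all, consider how much of each frame interval the GPU could spend idle once it is capped. This is a generic frame-cap model, not a description of NVIDIA's implementation; `idle_fraction` and the 12.5 ms render time are hypothetical values chosen for illustration.

```python
def idle_fraction(render_time_ms: float, target_fps: float) -> float:
    """Fraction of each frame interval the GPU could idle under a frame cap."""
    frame_budget_ms = 1000.0 / target_fps
    if render_time_ms >= frame_budget_ms:
        return 0.0  # the GPU cannot reach the target, so there is no slack
    return 1.0 - render_time_ms / frame_budget_ms


# Hypothetical game that renders a frame in 12.5 ms (80 FPS uncapped).
for fps in (30, 45, 60):
    share = idle_fraction(12.5, fps)
    print(f"{fps} FPS cap: GPU idle for {share:.1%} of each frame interval")
```

In this model a 30 FPS cap leaves the GPU idle for well over half of each interval, while a 60 FPS cap leaves only a quarter, which is why a higher target generally costs battery life.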

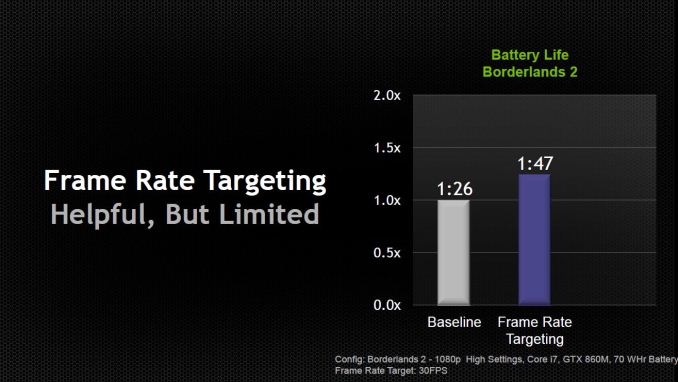

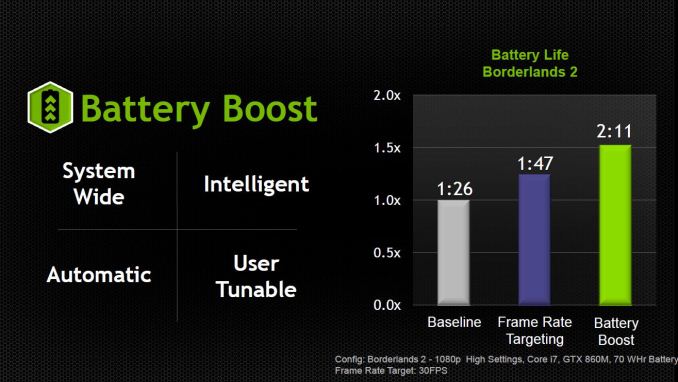

NVIDIA went into quite a bit of detail explaining how Battery Boost is more than simply targeting a lower average frame rate. That’s certainly a large part of the power savings, but it’s more than just capping the frame rate at 30 FPS. NVIDIA provided some information with a test laptop running Borderlands 2 where the baseline measurement was 86 minutes of battery life; turning on frame rate targeting at 30 FPS improved battery life by around 25% to 107 minutes, while Battery Boost is able to further improve on that result by another 22% and deliver 131 minutes of gameplay.
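The percentages in NVIDIA's Borderlands 2 example are easy to verify, and chaining them gives the overall gain. The sketch below uses only the three runtimes quoted above; `gain_pct` is an illustrative helper.

```python
def gain_pct(after_min: float, before_min: float) -> float:
    """Percentage increase in battery runtime."""
    return (after_min / before_min - 1.0) * 100.0


baseline_min = 86        # no frame rate target
fps_target_min = 107     # 30 FPS frame rate targeting only
battery_boost_min = 131  # full Battery Boost

print(f"Frame rate target vs baseline: {gain_pct(fps_target_min, baseline_min):.1f}%")
print(f"Battery Boost vs frame target: {gain_pct(battery_boost_min, fps_target_min):.1f}%")
print(f"Battery Boost vs baseline:     {gain_pct(battery_boost_min, baseline_min):.1f}%")
```

The combined improvement works out to a little over 50%, which sits at the low end of the 50-100% range NVIDIA quotes for Battery Boost.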

NVIDIA didn’t reveal all the details of what they’re doing, but they did state that Battery Boost absolutely requires a new 800M GPU – that it’s not a purely software driven solution. It’s an “intelligent” solution that has the drivers monitoring all aspects of the system – CPU, GPU, RAM, etc. – to reduce power draw and reach maximum efficiency. I suspect some of the “secret sauce” comes by way of capping CPU clocks, since most games generally don’t need the CPU running at maximum Turbo Boost to deliver decent frame rates, but what else might be going on is difficult to say. It also sounds as though Battery Boost requires certain features in the laptop firmware to work, which again would limit the feature to new notebooks.

Besides being system wide and intelligent, NVIDIA has two other “talking points” for Battery Boost. It will be automatic – unplug and the Battery Boost settings are enabled; plug in and you switch back to the AC performance mode. That’s easy enough to understand, but there’s a catch: you can’t have a game running and have it switch settings on-the-fly. That’s not really surprising, considering many games require you to exit and restart if you want to change certain settings. Basically, if you’re going to be playing a game while unplugged and you want the benefits of Battery Boost to be active, you’ll need to unplug before starting the game.

As for being user tunable, the above gallery already more or less covers that point – you can customize the settings for each game within GFE. I did comment to NVIDIA that it would be good to add target frame rate to the list of customization options on a per-game basis, as there are some games where you might want a slightly higher frame rate and others where lower frame rates would be perfectly adequate. NVIDIA indicated this would be something they can add at a later date, but for now the target frame rate is a global setting, so you’ll need to manually change it if you want a higher or lower frame rate for a specific game – and understand of course that higher frame rates will generally increase the load on the GPU and thus reduce battery life.

There’s one other aspect to mobile gaming that’s worth a quick note. Most high-end gaming laptops prior to now have throttled the GPU clocks when unplugged. This wasn’t absolutely necessary but was a conscious design decision. In order to maintain higher clocks, the battery and power circuitry would need to be designed to deliver sufficient power, and this often wasn’t considered practical or important. Even with a 90Wh battery, the combination of a GTX-class GPU and a fast CPU could easily drain the battery in under 30 minutes if allowed to run at full performance. So the electrical design power (EDP) of most gaming notebooks until now has capped GPU performance while unplugged, and even then battery life while gaming has typically been less than an hour. Now with Battery Boost, NVIDIA has been working with the laptop OEMs to ensure that the EDP of the battery subsystem will be capable of meeting the needs of the GPU.
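The battery figures in this paragraph imply some fairly steep average power draws. A rough sketch of the relationship, assuming the full rated capacity is usable and ignoring conversion losses (both helper names are illustrative):

```python
def average_draw_watts(capacity_wh: float, runtime_minutes: float) -> float:
    """Average system power needed to drain the battery in the given time."""
    return capacity_wh / (runtime_minutes / 60.0)


def runtime_minutes(capacity_wh: float, draw_watts: float) -> float:
    """Runtime in minutes for a given average system power draw."""
    return capacity_wh / draw_watts * 60.0


# A 90Wh battery drained in 30 minutes vs. one hour.
print(f"30 min: {average_draw_watts(90, 30):.0f} W average")
print(f"60 min: {average_draw_watts(90, 60):.0f} W average")
# Average draw the whole system would need to stay under for two hours.
print(f"45 W:   {runtime_minutes(90, 45):.0f} minutes")
```

Draining a 90Wh battery in half an hour means sustaining about 180W, so reaching two hours of gaming on the same battery requires holding the entire system to roughly 45W on average.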

Your personal opinion of Battery Boost and whether or not it’s useful will largely depend on what you do with your laptop. Presumably the main reason for getting a laptop instead of a desktop is the ability to be mobile and move around the house or take your PC with you, and Battery Boost should help improve the mobility aspect for gaming. If you rarely/never game while unplugged, it won’t necessarily help in any way but then it won’t hurt either. I suspect many of us simply don’t bother trying to game while unplugged because it drains the battery so quickly, and potentially doubling your mobile gaming time will certainly help in that respect. It’s a “chicken and egg” scenario, where people don’t game because it’s not viable and there’s not much focus on improving mobile gaming because people don’t play while unplugged. NVIDIA is hoping by taking the first step to improving mobile battery life that they can change what people expect from gaming laptops going forward.

Other Features: GameStream, ShadowPlay, Optimus, etc.

Along with Battery Boost, the GTX class of 800M GPUs will now also support NVIDIA’s GameStream and ShadowPlay technologies, again through NVIDIA’s GeForce Experience software. Unlike Battery Boost, these are almost purely software driven solutions and so they are not strictly limited to 800M hardware. However, the performance requirements are high enough that NVIDIA is limiting their use to GTX GPUs, and all GTX 700M and 800M parts will support the feature, along with the GTX 680M, 675MX, and 670MX. Basically, all GTX Kepler and Maxwell parts will support GameStream and ShadowPlay; the requirement for Kepler/Maxwell incidentally comes because GameStream and ShadowPlay both make use of NVIDIA’s hardware encoding feature.

If you haven’t been following NVIDIA’s software updates, the quick summary is that GameStream allows the streaming of games from your laptop/desktop to an NVIDIA SHIELD device. Not all games are fully supported/optimized, but there are over 50 officially supported games and most Steam games should work via Steam’s Big Picture mode. I haven’t really played with GameStream yet, so I’m not in a position to say much more on the subject right now, but if you don’t mind playing with a gamepad it’s another option for going mobile – within the confines of your home – and can give you much longer unplugged time. GameStream does require a good WiFi connection (at least 300Mbps 5GHz, though you can try it with slower connections I believe), and the list of GameStream-Ready routers can be found online.

On a related note, something I'd really like to see is support for GameStream extended to more than just SHIELD devices. NVIDIA is already able to stream 1080p content in this fashion, and while it might not match the experience of a GTX 880M notebook running natively, it would certainly be a big step up from lower-end GPUs and iGPUs. Considering the majority of work is done on the source side (rendering and encoding a game) and the target device only has to decode a video stream and provide user I/O, it shouldn't be all that difficult. Take it a step further and we could have something akin to the GRID Gaming Beta coupled with a gaming service (Steam, anyone?) and you could potentially get five or six hours of "real" gaming on any supported laptop! Naturally, NVIDIA is in the business of selling GPUs and I don't see them releasing GameStream for non-NVIDIA GPUs (i.e. Intel iGPUs) any time soon, if ever. Still, it's a cool thought and perhaps someone else can accomplish this. (And yes, I know there are already services that are trying to do cloud gaming, but they have various drawbacks; being able to do my own "local cloud gaming" would definitely be cool.)

ShadowPlay targets a slightly different task, namely that of capturing your best gaming moments. When enabled in GFE, at any point in time you can press Alt+F10 to save up to the last 20 minutes (user configurable within GFE) of game play. Manual recording is also supported, with Alt+F9 used to start/stop recording and a duration limited only by the amount of disk space you have available. (Both hotkeys are customizable as well.) The impact on performance with ShadowPlay is typically around 5%, and at most around 10%, with a maximum resolution of up to 1080p (higher resolutions will be automatically scaled down to 1080p).

We’ve mentioned GeForce Experience quite a few times now, and NVIDIA is particularly proud of all the useful features they’ve managed to add to GFE since it first went into open beta at the start of 2013. Initially GFE’s main draw was the ability to apply “optimal” settings to all supported/detected games, but obviously that’s no longer the only reason to use the software. Anyway, I’m not usually much of a fan of “automagic” game settings, but GFE does tend to provide appropriate defaults, and you can always adjust any settings that you don’t agree with. AMD is trying to provide a similar feature via their Raptr gaming service, but by using a GPU farm to automatically test and generate settings for all of their GPUs NVIDIA is definitely ahead for the time being.

NVIDIA being ahead of AMD applies to other areas as well, to varying degrees. Optimus has seen broad support for nearly every laptop equipped with an NVIDIA GPU for a couple years now, and the number of edge cases where Optimus doesn’t work quite as expected is quite small – I can’t remember the last time I had any problems with the feature. Enduro tends to work okay on the latest platforms as well, but honestly I haven’t received a new Enduro-enabled laptop since about a year ago, and there have been plenty of times where Enduro – and AMD’s drivers – have been more than a little frustrating. PhysX and 3D Vision also tend to get used/supported more than the competing solutions, but I’d rate those as being less important in general.

Gaming Notebooks Are Thriving

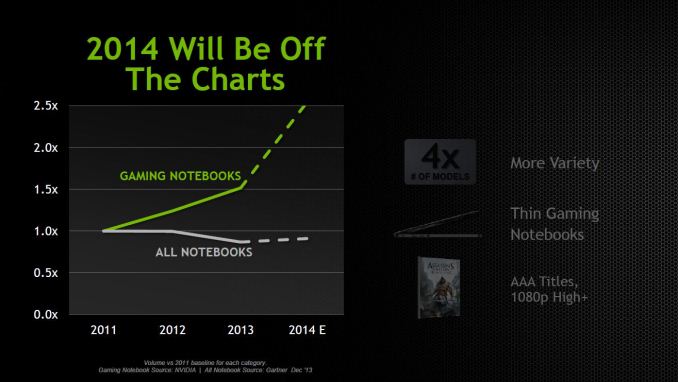

Wrapping things up, I’ll include a gallery of NVIDIA’s slides at the end, but let’s quickly go over a few interesting items. NVIDIA provided some research showing that PC gaming is an extremely large industry – competing in yearly revenue with the likes of movie theater ticket sales, music, and DVD/Blu-ray video sales. Along with that growth, growth in the gaming notebooks market has been significant over the past three years, and even greater growth is expected for 2014. A large part of that is no doubt thanks to Optimus, as it allows potentially any notebook to deliver good gaming performance when you need it while not absolutely killing battery life when you don’t need the GPU. The other aspect is that we are simply seeing more GTX class notebooks shipping, thanks to GPUs like the GTX 760M/765M, and with the 850M now moving into the GTX class (which is where NVIDIA draws the line for “gaming notebooks”) we’ll see even more. But it’s not just about names; the following slide is a great illustration of what we’ve seen since 2011:

It’s not too hard to guess what the notebook on the left is (hello Alienware M17x R3), while on the right it looks like Gigabyte’s P34G. That’s not really important, but the difference in size is pretty incredible, and what’s more, the laptop on the right is actually 30% faster with the GTX 850M than the GTX 580M from 2011. It also happens to deliver better battery life – gaming or otherwise. Leaner, lighter, and faster are all good things for gaming notebooks. As you would expect, there are quite a few GTX 800M notebooks coming out now or in the very near future (while the other 800M parts will mostly come a bit later). NVIDIA provided the following images along with some other information on upcoming laptops, so if you’re in the market keep an eye out for the following (in alphabetical order).

The Alienware 17 will be updated to support both the GTX 870M and GTX 880M. ASUS’ G750JZ will update the G750JH and move from GTX 780M to GTX 880M (and apparently Optimus will be enabled this round). Gigabyte will have new versions of the P34G, the P34R with 860M and the P34J with 850M, an updated P35R (P35K core design) with 860M, and apparently updates to the P25 and P27 as well (likely with mainstream 800M class GPUs, so specifics haven’t been given yet). Lenovo’s Y50 will be their new gaming notebook, with a single GTX 860M and an optional high-DPI display. MSI will also be updating their GT70, GT60, GS70, and GS60; the GT models will support GTX 880M and 870M while the GS models will support the GTX 870M and 860M. And finally (though I suspect we’ll see Clevo, Toshiba, and Samsung announce products with GTX 800M GPUs at some point, along with perhaps some other OEMs as well), Razer will have a new 14” Blade with GTX 870M and a 17” Blade Pro with GTX 860M – and no, that’s not a typo, though perhaps we’ll see more than one model of Blade Pro as it seems odd for the smaller laptop to support a faster GPU.

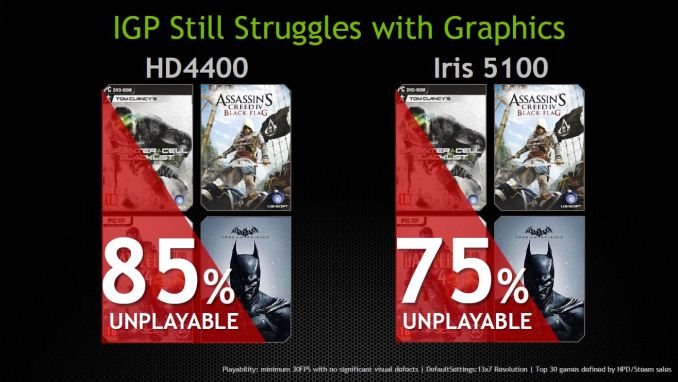

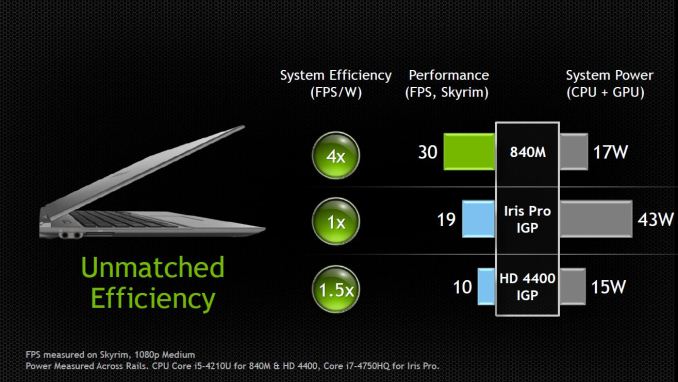

Finally, on the topic of the need for discrete GPUs in laptops, NVIDIA noted that over 85% of the top 30 games (according to NPD/Steam sales) remain unplayable with Intel’s HD 4400 (no surprise, as that’s basically the same performance as HD 4000), while 75% still remain unplayable with Iris 5100 – this is using 1366x768 resolution with “default settings” (presumably medium, but it’s not specifically stated). What’s missing is information on what’s playable with Iris Pro. I can say that most games I’ve tested on Iris Pro are able to break 30FPS average frame rates, but ironically power use on the i7-4750HQ laptop I’ve tested is actually worse when gaming than on most laptops with GT class 700M GPUs. NVIDIA shows this in their results as well, and while I can’t verify the numbers, they claim to provide better performance with an 840M than Iris Pro 5200 while using less than half as much power.

Intel has certainly improved their iGPU performance with the last several processor generations, but unfortunately the higher performance has often come only when given more power – so for example a GT2 or GT3 Haswell part limited to 15W total TDP (i.e. in an Ultrabook) is typically no better than a GT2 Ivy Bridge part with a 17W TDP. Broadwell will likely bring us a “GT4” part (to go along with GT3e), but we’ll have to see if Intel is able to improve performance within the same power envelope when those parts start shipping later this year.

Closing Thoughts

Overall, NVIDIA’s mobile GPU solutions continue to be the de facto standard bearer for gaming laptops. AMD’s upcoming Kaveri APUs will almost certainly do well in the budget sector, but users that want more performance – from both the CPU as well as the GPU – will likely continue to go with NVIDIA Optimus solutions, and if you’re the type of gamer that wants to be able to run at least 1080p with high quality settings, you’ll need at least a GTX class GPU to get there. The good news is that you should have plenty of choices in the coming months, and not only are we seeing faster GPUs but many laptops are starting to come out with high quality 3K and 4K displays.

Speaking of which, I also want to note that anyone that thinks “gaming laptops” are a joke either needs to temper their requirements or else give some of the latest offerings a shot. While it’s not possible to simply run all games at 1080p (or QHD+) with maxed out settings without a beefy GPU, even the GT 750M GDDR5 is able to deliver a good gaming experience for most titles at 900p High/1080p Medium settings. The GTX 850M should be quite a bit faster (~60%) than the GT 750M, and we should see it in notebooks that may cost as little as $1000. It’s no surprise then that NVIDIA thinks 2014 gaming notebook sales will be “off the charts”.

As is often the case, we haven’t been sampled any notebooks prior to the launch of the latest 800M series, but we should get some in the near future. We’re looking forward to Maxwell parts in particular, though for now it appears we’ll have to wait a bit for the high-end Maxwell SKUs to arrive (just like on the desktop). It will also be interesting to see how the GTX 860M Kepler and Maxwell variants compare in terms of performance, power, and battery life; I suspect the Maxwell parts will be the ones to get for optimal performance and power requirements, but we shall see.

The latest updates from NVIDIA aren't revolutionary in most areas, but Battery Boost at least could open the doors for more people to consider gaming notebooks. There's always the question of long-term reliability and upgradeability, which are inherently easier to deal with on a desktop, but with a modern laptop I can quite easily connect to an external display, keyboard, mouse, and speakers and never realize that I'm not using a desktop – until I launch a game, at least. What's even better is that when it comes time to take a trip, if all your data already resides on a laptop there's nothing to worry about; you just pack up and leave. That convenience factor alone is enough for many to have made the switch to using a laptop full-time, and I'm not far off from joining them. 2014 may prove to be the year where I finally make the switch.

Last but not least, for those that like the unfiltered NVIDIA slides, you can find those in the gallery below.
