Original Link: https://www.anandtech.com/show/2001

Exclusive: ASUS Debuts AGEIA PhysX Hardware

by Derek Wilson on May 5, 2006 3:00 AM EST. Posted in: GPUs

Introduction

A little over a year ago, we first heard about a company called AGEIA whose goal was to bring high quality physics processing power to the desktop. Today they have succeeded in their mission. For a short while, systems with the PhysX PPU (physics processing unit) have been shipping from Dell, Alienware, and Falcon Northwest. Soon, PhysX add-in cards will be available in retail channels. Today, the very first PhysX accelerated game has been released: Tom Clancy's Ghost Recon Advanced Warfighter, and to top off the excitement, ASUS has given us an exclusive look at their hardware.

We have put together a couple of benchmarks designed to illustrate the impact of AGEIA's PhysX technology on game performance, and we will certainly comment heavily on our experience while playing the game. The potential benefits have been discussed quite a bit over the past year, but now we finally get a taste of what the first PhysX accelerated games can do.

With NVIDIA and ATI starting to dip their toes into physics acceleration as well (with Havok FX and in-house demos of other technology), knowing the playing field is very important for all parties involved. Many developers and hardware manufacturers will definitely give this technology some time before jumping on the bandwagon, as should be expected. Will our exploration show enough added benefit for PhysX to be worth the investment?

Before we hit the numbers, we want to take another look at the technology behind the hardware.

AGEIA PhysX Technology and GPU Hardware

First off, here is the lowdown on the hardware as we know it. AGEIA, being the first and only consumer-oriented physics processor designer right now, has not given us as much in-depth technical detail as other hardware designers. We certainly understand the need to protect intellectual property, especially at this stage in the game, but this is what we know.

PhysX Hardware:

125 Million transistors

130nm manufacturing process

128MB 733MHz Data Rate GDDR3 RAM

128-bit memory bus interface

20 giga-instructions per second

2 Tb/sec internal memory bandwidth

"Dozens" of fully independent cores

There are quite a few things to note about this architecture. Even without knowing all the ins and outs, it is quite obvious that this chip will be a force to be reckoned with in the physics realm. A graphics card, even with a 512-bit internal bus running at core speed, has less than 350 Gb/sec internal bandwidth. There are also lots of restrictions on the way data moves around in a GPU. For instance, there is no way for a pixel shader to read a value, change it, and write it back to the same spot in local RAM. There are ways to deal with this when tackling physics, but making highly efficient use of nearly 6 times the internal bandwidth for the task at hand is a huge plus. CPUs aren't able to touch this type of internal bandwidth either. (Of course, we're talking about internal theoretical bandwidth, but the best we can do for now is relay what AGEIA has told us.)
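To put the bandwidth figures side by side, here is a rough back-of-the-envelope sketch. The 650MHz GPU core clock is our own illustrative assumption, as is the idea that each bus moves its full width once per transfer; only the 128-bit bus, the 733MHz data rate, the 2 Tb/sec figure, and the 350 Gb/sec ceiling come from the text above.

```python
# Back-of-the-envelope peak bandwidth figures, in gigabits per second (Gb/s).
# Anything marked "assumed" is illustrative and does not come from AGEIA.

def peak_gbps(bus_width_bits: int, transfers_per_sec: float) -> float:
    """Peak bandwidth of a bus that moves bus_width_bits per transfer."""
    return bus_width_bits * transfers_per_sec / 1e9

# External memory: 128-bit bus at a 733MHz data rate (from the spec list).
physx_memory_gbps = peak_gbps(128, 733e6)
physx_memory_gbytes = physx_memory_gbps / 8  # gigabytes per second

# GPU internal bus: 512 bits per core clock. The clock speed is assumed.
ASSUMED_GPU_CORE_HZ = 650e6
gpu_internal_gbps = peak_gbps(512, ASSUMED_GPU_CORE_HZ)

# AGEIA's quoted internal figure (2 Tb/sec) against the 350 Gb/sec GPU ceiling.
PHYSX_INTERNAL_GBPS = 2000.0
GPU_CEILING_GBPS = 350.0
ratio_vs_ceiling = PHYSX_INTERNAL_GBPS / GPU_CEILING_GBPS

print(f"PhysX external memory: {physx_memory_gbps:.1f} Gb/s "
      f"({physx_memory_gbytes:.1f} GB/s)")
print(f"GPU internal bus (assumed clock): {gpu_internal_gbps:.1f} Gb/s")
print(f"PhysX internal vs. GPU ceiling: {ratio_vs_ceiling:.1f}x")
```

Under these assumptions the GPU figure lands under the 350 Gb/sec ceiling, and the quoted PhysX internal bandwidth works out to a little under 6 times that ceiling. All of these are theoretical peaks, not measurements.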

Physics, as we noted in last year's article, generally presents itself in sets of highly dependent small problems. Graphics has become sets of highly independent mathematically intense problems. It's not that GPUs can't be used to solve these problems where the input to one pixel is the output of another (performing multiple passes and making use of render-to-texture functionality is one obvious solution); it's just that much of the power of a GPU is mostly wasted when attempting to solve this type of problem. Making use of a great deal of independent processing units makes sense as well. In a GPU's SIMD architecture, pixel pipelines execute the same instructions on many different pixels. In physics, it is much more often the case that different things need to be done to every physical object in a scene, and it makes much more sense to attack the problem with a proper solution.
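The contrast between independent and dependent work can be sketched with a toy example. This is purely our own illustration and says nothing about how the PhysX chip or any GPU is implemented: `brighten` and `relax_chain` are hypothetical helpers, and the chain of points is a stand-in for the kind of constraint solving a physics engine does.

```python
def brighten(pixels):
    """Independent work: each output depends only on its own input pixel."""
    return [min(255, p + 40) for p in pixels]


def relax_chain(positions, rest_length, sweeps=50):
    """Dependent work: Gauss-Seidel style relaxation of a 1D chain of points.

    Each constraint wants neighbouring points to sit rest_length apart.
    Fixing one constraint moves a point that the next constraint reads
    right away, so the order of the inner loop matters.
    """
    pos = list(positions)
    for _ in range(sweeps):
        for i in range(1, len(pos)):
            error = (pos[i] - pos[i - 1]) - rest_length
            pos[i - 1] += error / 2  # move both points halfway toward the fix
            pos[i] -= error / 2
    return pos


print(brighten([0, 100, 250]))
chain = relax_chain([0.0, 0.5, 3.0, 3.2], rest_length=1.0)
gaps = [b - a for a, b in zip(chain, chain[1:])]
print([round(g, 3) for g in gaps])
```

Every element of `brighten` could be handed to a separate pipeline running the same instruction. In `relax_chain`, each step reads a value the previous step just wrote, which is the sort of dependency that forces multiple passes on a GPU.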

To be fair, NVIDIA and ATI are not arguing that they can compete with the physics processing power AGEIA is able to offer in the PhysX chip. The main selling point of physics on the GPU is that everyone who plays games (and would want a physics card) already has a graphics card. Solutions like Havok FX which use SM3.0 to implement physics calculations on the GPU are good ways to augment existing physics engines. These types of solutions will add a little more punch to what developers can do. This won't create a revolution, but it will get game developers to look harder at physics in the future, and that is a good thing. We have yet to see Havok FX or a competing solution in action, so we can't go into any detail on what to expect. However, it is obvious that a multi-GPU platform will be able to benefit from physics engines that make use of GPUs: there are plenty of cases where games are not able to take 100% advantage of both GPUs. In single GPU cases, there could still be a benefit, but the more graphically intensive a scene, the less room there is for the GPU to worry about anything else. We are certainly seeing titles coming out like Oblivion which are able to bring everything we throw at them to a crawl, so balance will certainly be an issue for Havok FX and similar solutions.

DirectX 10 will absolutely benefit AGEIA, NVIDIA, and ATI. For physics on GPU implementations, DX10 will decrease overhead significantly. State changes will be more efficient, and many more objects will be able to be sent to the GPU for processing every frame. This will obviously make it easier for GPUs to handle doing things other than graphics more efficiently. A little less obviously, PhysX hardware accelerated games will also benefit from a graphics standpoint. With the possibility for games to support orders of magnitude more rigid body objects under PhysX, overhead can become an issue when batching these objects to the GPU for rendering. This is a hard thing for us to test for explicitly, but it is easy to understand why it will be a problem when we have developers already complaining about the overhead issue.

While we know the PhysX part can handle 20 GIPS, this measure is likely simple independent instructions. We would really like to get a better idea of how much actual "work" this part can handle, but for now we'll have to settle for this ambiguous number and some real world performance. Let's take a look at the ASUS card and then take a look at the numbers.

ASUS PhysX Card

It's not as dramatic as a 7900 GTX or an X1900 XTX, but here it is in all its glory. We welcome the new ASUS PhysX card to the fold:

The chip and the RAM are under the heatsink/fan, and there really isn't that much else going on here. The slot cover on the card has AGEIA PhysX written on it, and there's a 4-pin Molex connector on the back of the card for power. We're happy to report that the fan doesn't make much noise and the card doesn't get very warm (especially when compared to GPUs).

We did have an occasional issue when installing the card after the drivers were already installed: after we powered up the system the first time, we couldn't use the AGEIA hardware until we hard powered our system and then booted up again. This didn't happen every time we installed the card, but it did happen more than once. This is probably not a big deal and could easily be an issue with the fact that we are using early software and early hardware. Other than that, everything seemed to work great in the two pieces of software it's currently possible to test.

Our test system is set up similarly to our graphics test systems, with the addition of a low speed CPU. We were curious to find out if the PhysX card helps out slower processors more than fast CPUs, so we set our FX-57 to a 9X multiplier (1.8GHz) to simulate an Opteron 144. Otherwise, the test bed is the same as we've used for recent GPU reviews:

AMD Athlon 64 FX-57

AMD Opteron 144 (simulated)

ASUS NVIDIA nForce4 SLI X16 Motherboard

2GB OCZ DDR RAM

ATI Radeon X1900 XTX

ASUS PhysX PPU

Windows XP SP2

OCZ PowerStream 600W PSU

Now let's see how the card actually performs in practice.

PhysX Performance

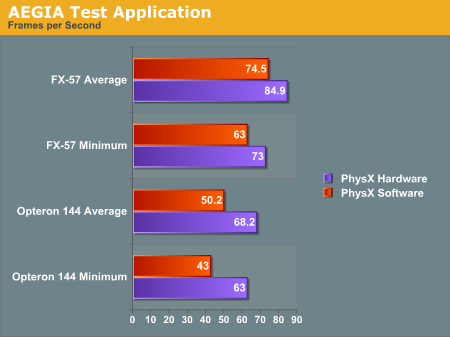

The first program we tested is AGEIA's test application. It's a small scene with a pyramid of boxes stacked up. The only thing it does is shoot a ball at the boxes. We used FRAPS to get the framerate of the test app with and without hardware support.
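For readers who want to reproduce this kind of measurement from their own frame logs, here is a minimal sketch of one common way to derive average and minimum framerates. It assumes a plain list of frame completion timestamps in seconds, starting at zero; it is not a description of how FRAPS computes its figures, and the sample data is made up.

```python
from collections import Counter


def fps_stats(timestamps_s):
    """Return (average_fps, minimum_fps) from frame timestamps in seconds.

    Frames are bucketed into whole seconds, and only complete seconds are
    counted so that a partial final second cannot skew the minimum.
    """
    if not timestamps_s:
        raise ValueError("no frames recorded")
    full_seconds = int(timestamps_s[-1])
    if full_seconds < 1:
        raise ValueError("need at least one full second of data")
    per_second = Counter(int(t) for t in timestamps_s if t < full_seconds)
    counts = [per_second.get(s, 0) for s in range(full_seconds)]
    return sum(counts) / len(counts), min(counts)


# Hypothetical run: 60 frames, then 30 frames, then 45 frames per second.
timestamps = []
for second, frames in enumerate([60, 30, 45]):
    timestamps += [second + i / frames for i in range(frames)]
timestamps.append(3.0)  # marks the end of the recording

average_fps, minimum_fps = fps_stats(timestamps)
print(f"average {average_fps:.1f} FPS, minimum {minimum_fps} FPS")
```

A per-second minimum like this hides very short stalls, so a log of individual frame times would be needed to catch a dip that lasts only a frame or two.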

With the hardware, we were able to get a better minimum and average framerate after shooting the boxes. Obviously this case is a little contrived. The scene is only CPU limited with no fancy graphics going on to clutter up the GPU: just a bunch of solid colored boxes bouncing around after being shaken up a bit. Clearly the PhysX hardware is able to take the burden off the CPU when physics calculations are the only bottleneck in performance. This is to be expected, and doing the same amount of work will give higher performance under PhysX hardware, but we still don't have any idea of how much more the hardware will really allow.

Maybe in the future AGEIA will give us the ability to increase the number of boxes. For now, we get 16% higher minimum frame rates and 14% higher average frame rates by using the AGEIA PhysX card over just the FX-57 CPU. Honestly, that's a little underwhelming, considering that the AGEIA test application ought to be providing more of a best case scenario.

Moving to the slower Opteron 144 processor, the PhysX card does seem to be a bit more helpful. Average frame rates are up 36% and minimum frame rates are up 47%. The problem is, the target audience of the PhysX card is far more likely to have a high-end processor than a low-end "chump" processor -- or at the very least, they would have an overclocked Opteron/Athlon 64.
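The percentages quoted here are simple relative gains over the software-only result. A minimal sketch of the arithmetic follows; the framerates in the example are hypothetical placeholders chosen to give a round number, not our measured results.

```python
def percent_gain(baseline_fps: float, accelerated_fps: float) -> float:
    """Relative improvement of accelerated_fps over baseline_fps, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline framerate must be positive")
    return (accelerated_fps - baseline_fps) / baseline_fps * 100.0


# Hypothetical example: going from 50 FPS in software to 57 FPS with hardware.
example_gain = percent_gain(50.0, 57.0)
print(f"{example_gain:.0f}% higher")
```

A negative result means the accelerated run was slower than the baseline.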

Let's take a look at Ghost Recon and see if the story changes any.

Ghost Recon Advanced Warfighter

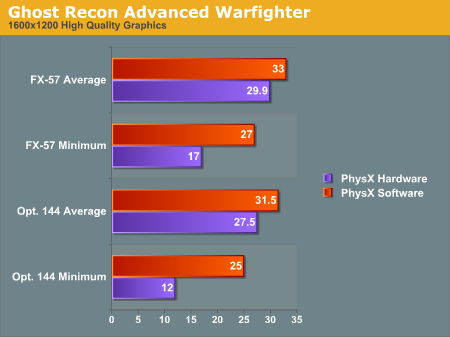

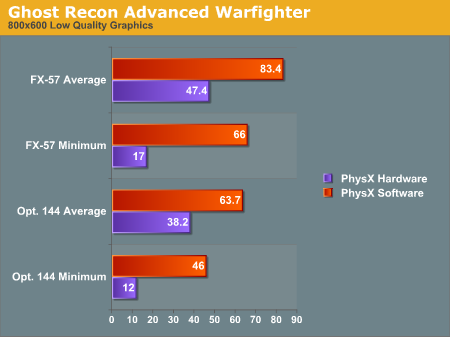

This next test will be a bit different. Rather than testing the same level of physics with hardware and software, we are only able to test the software at a low physics level and the hardware at a high physics level. We haven't been able to find any way to enable hardware quality physics without the board, nor have we discovered how to enable lower quality physics effects with the board installed. These numbers are still useful as they reflect what people will actually see.

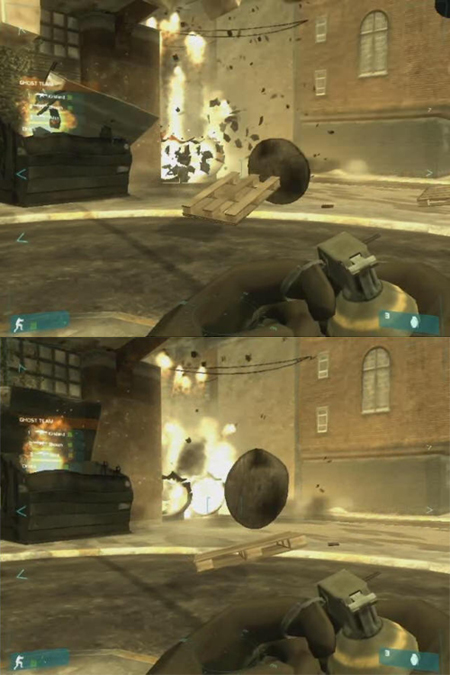

For this test, we looked at a low quality setting (800x600 with low quality textures and no AF) and a high quality setting (1600x1200 with high quality textures and 8x AF). We recorded both the minimum and the average framerate. Here are a couple screenshots with (top) and without (bottom) PhysX, along with the results:

The graphs show some interesting results. We see a lower framerate in all cases when using the PhysX hardware. As we said before, installing the hardware automatically enables higher quality physics. We can't get a good idea of how much better the PhysX hardware would perform than the CPU, but we can see a couple facts very clearly.

Looking at the average framerate comparisons shows us that when the game is GPU limited there is relatively little impact for enabling the higher quality physics. This is the most likely case we'll see in the near term, as the only people buying PhysX hardware initially will probably also be buying high end graphics solutions and pushing them to their limit. The lower end CPU does still have a relatively large impact on minimum frame rates, however, so the PPU doesn't appear to be offloading a lot of work from the CPU core.

The average framerates under low quality graphics settings (i.e. shifting the bottleneck from the GPU to another part of the system) show that high quality physics has a large impact on performance behind the scenes. The game has either become limited by the PhysX card itself or by the CPU, depending on how much extra physics is going on and where different aspects of the game are being processed. It's very likely this is more of a bottleneck on the PhysX hardware, as the difference between the 1.8 and 2.6 GHz CPU with PhysX is less than the difference between the two CPUs using software PhysX calculations.

If we shift our focus to the minimum framerates, we notice that when physics is accelerated by hardware our minimum framerate is very low at 17 frames per second regardless of the graphical quality - 12 FPS with the slower CPU. Our test is mostly that of an explosion. We record slightly before and slightly after a grenade blowing up some scenery, and the minimum framerate happens right after the explosion goes off.

Our working theory is that when the explosion starts, the debris that goes flying everywhere needs to be created on the fly. This can either be done on the CPU, on the PhysX card, or in both places depending on exactly how the situation is handled by the software. It seems most likely that the slowdown is the cost of instancing all these objects on the PhysX card and then moving them back and forth over the PCI bus and eventually to the GPU. It would certainly be interesting to see if a faster connection for the PhysX card - like PCIe x1 - could smooth things out, but that will most likely have to wait for a future generation of the hardware.
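To give a sense of scale for the bus theory, here is a rough sketch of how much data each link could move per frame at best. We assume the card sits on conventional 32-bit, 33.33MHz PCI (about 133 MB/s, shared by every PCI device) and compare it with first-generation PCIe x1 at 250 MB/s per direction. These are theoretical peaks, and the 60 FPS row is a hypothetical target rather than a measurement.

```python
# Theoretical peak link rates in megabytes per second (MB/s).
# Assumed: the card uses conventional 32-bit, 33.33MHz PCI.
PCI_PEAK_MBPS = (32 / 8) * 33.33   # about 133 MB/s, shared by all PCI devices
PCIE1_X1_PEAK_MBPS = 250.0         # first-generation PCIe x1, per direction


def per_frame_budget_mb(link_mbps: float, fps: float) -> float:
    """Most data (in MB) the link could move during one frame at this framerate."""
    if fps <= 0:
        raise ValueError("framerate must be positive")
    return link_mbps / fps


# 17 FPS is the minimum we recorded; 60 FPS is a hypothetical target.
for fps in (17, 60):
    pci = per_frame_budget_mb(PCI_PEAK_MBPS, fps)
    pcie = per_frame_budget_mb(PCIE1_X1_PEAK_MBPS, fps)
    print(f"{fps} FPS: PCI {pci:.2f} MB/frame, PCIe x1 {pcie:.2f} MB/frame")
```

Real throughput will be lower than these ceilings, and the sketch says nothing about how much data the game actually moves. It only shows that the per-frame budget on PCI is a little over half of what PCIe x1 would offer.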

We don't feel the drop in frame rates really affects playability as it's only a couple frames with lower framerates (and the framerate isn't low enough to really "feel" the stutter). However, we'll leave it to the reader to judge whether the quality gain is worth the performance loss. In order to help in that endeavor, we are providing two short videos (3.3MB Zip) of the benchmark sequence with and without hardware acceleration. Enjoy!

One final note is that judging by the average and minimum frame rates, the quality of the physics calculations running on the CPU is substantially lower than it needs to be, at least with a fast processor. Another way of putting it is that the high quality physics may be a little too high quality right now. The reason we say this is that our frame rates are lower -- both minimum and average rates -- when using the PPU. Ideally, we want better physics quality at equal or higher frame rates. Having more objects on screen at once isn't bad, but we would definitely like to have some control over the amount of additional objects.

Final Words

Ideally, we would have a few more games to test in order to get a better understanding of what developers are doing with the hardware. We'd also love a little more flexibility in how the software we test handles hardware usage and physics detail. For example, what sort of performance can be had using multithreaded physics calculations on dual-core or multi-core systems? Can a high-end CPU even handle the same level of physics detail as with the PhysX card, or has GRAW downgraded the complexity of the software calculations for a reason? It would also be very helpful if we could dig up some low level technical detail on the hardware. Unfortunately, you can't always get what you want.

For now, the tests we've run here are quite impressive in terms of visuals, but we can't say for certain whether or not the PPU contributes substantially to the quality. From what GRAW has shown us, and from the list of titles on the horizon, it is clear that developers are taking an interest in this new PPU phenomenon. We are quite happy to see more interactivity and higher levels of realism make their way into games, and we commend AGEIA for their role in speeding up this process.

The added realism and immersion of playing Ghost Recon Advanced Warfighter with hardware physics is a huge success in this gamer's opinion. Granted, the improved visuals aren't the holy grail of game physics, but this is an excellent first step. In a fast fire fight with bullets streaming by, helicopters raining destruction from the heavens, and grenades tearing up the streets, the experience is just that much more hair raising with a PPU plugged in.

If every game out right now supported some type of physics enhancement with a PPU under the hood, it would be easy to recommend it to anyone who wants higher image quality than the most expensive CPU and GPU can currently offer. For now, one or two games aren't going to get a recommendation for spending the requisite $300, especially when we don't know the extent of what other developers are doing. For those with money to burn, it's certainly a great part to play with. Whether it actually becomes worth the price of admission remains to be seen. We are hopefully optimistic having seen these first fruits, especially considering how much more can be done.

Obviously, there's going to be some question of whether or not the PPU will catch on and stay around for the long haul. Luckily, software developers need not worry. AGEIA has worked very hard to do everything right, and we think they're on the right track. Their PhysX SDK is an excellent software physics solution in its own right - Sony is shipping it with every PS3 development console, and there are Xbox 360 games around with the PhysX SDK powering them as well. Even if the hardware totally fails to gain acceptance, games can still fall back to a software solution. Unfortunately, it's still up to developers to provide the option for modifying physics quality under software as well as hardware, as GRAW demonstrates.

As of now, the PhysX SDK has been adopted by engines such as: UnrealEngine3 (Unreal Tournament 2007), Reality Engine (Cell Factor), and Gamebryo (recently used for Elder Scrolls IV: Oblivion, though Havok is implemented in lieu of PhysX support). This type of developer penetration is good to see, and it will hopefully provide a compelling upgrade argument to consumers in the next 6-12 months.

We are still an incredibly long way off from seeing games that require the PhysX PPU, but it's not outside the realm of possibility. With such easy access to the PhysX SDK for developers, there has got to be some pressure now for those one to two year timeframe products to get in as many beyond-the-cutting-edge features as possible. Personally, I'm hoping the AGEIA PhysX hardware support will make it onto the list. If AGEIA is able to prove their worth on the console middleware side, we may end up seeing a PPU in XBox3 and PS4 down the line as well. There were plenty of skeptics that doubted the PhysX PPU would ever make it out the door, but having passed that milestone, who knows how far they'll go?

We're still a little skeptical about how much the PhysX card is actually doing that couldn't be done on a CPU -- especially a dual core CPU. Hopefully this isn't the first "physics decelerator", rather like the first S3 ViRGE 3D chip was more of a step sideways for 3D than a true enhancement. The promise of high quality physics acceleration is still there, but we can't say for certain at this point how much faster a PhysX card really makes things - after all, we've only seen one shipping title, and it may simply be a matter of making better optimizations to the PhysX code. With E3 on the horizon and more games coming out "real soon now", rest assured that we will have continuing coverage of AGEIA and the PhysX PPU.