Original Link: https://www.anandtech.com/show/13346/the-nvidia-geforce-rtx-2080-ti-and-2080-founders-edition-review

The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: Raytrace, GeForce, GPUs, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

While it was roughly 2 years from Maxwell 2 to Pascal, the journey to Turing has felt much longer despite a similar 2 year gap. There’s some truth to the feeling: over the past couple of years, there has been nearly every possible development in the GPU space except next-generation gaming video cards, from Intel’s planned return to discrete graphics to NVIDIA’s Volta and cryptomining-specific cards. Finally, at Gamescom 2018, NVIDIA announced the GeForce RTX 20 series, built on TSMC’s 12nm “FFN” process and powered by the Turing GPU architecture. Launching today with full general availability is just the GeForce RTX 2080, as the GeForce RTX 2080 Ti was delayed a week to the 27th, while the GeForce RTX 2070 is due in October. So up for review today are the GeForce RTX 2080 Ti and GeForce RTX 2080.

But a standard new generation of gaming GPUs this is not. The “GeForce RTX” brand, ousting the long-lived “GeForce GTX” moniker in favor of their announced “RTX technology” for real time ray tracing, aptly underlines NVIDIA’s new vision for the video card future. Like we saw last Friday, Turing and the GeForce RTX 20 series are designed around a set of specialized low-level hardware features and an intertwined ecosystem of supporting software currently in development. The central goal is a long-held dream of computer graphics researchers and engineers alike – real time ray tracing – and NVIDIA is aiming to bring that to gamers with their new cards, and willing to break some traditions on the way.

NVIDIA GeForce Specification Comparison

| | RTX 2080 Ti | RTX 2080 | RTX 2070 | GTX 1080 |
|---|---|---|---|---|
| CUDA Cores | 4352 | 2944 | 2304 | 2560 |
| Core Clock | 1350MHz | 1515MHz | 1410MHz | 1607MHz |
| Boost Clock | 1545MHz (FE: 1635MHz) | 1710MHz (FE: 1800MHz) | 1620MHz (FE: 1710MHz) | 1733MHz |
| Memory Clock | 14Gbps GDDR6 | 14Gbps GDDR6 | 14Gbps GDDR6 | 10Gbps GDDR5X |
| Memory Bus Width | 352-bit | 256-bit | 256-bit | 256-bit |
| VRAM | 11GB | 8GB | 8GB | 8GB |
| Single Precision Perf. | 13.4 TFLOPs | 10.1 TFLOPs | 7.5 TFLOPs | 8.9 TFLOPs |
| Tensor Perf. (INT4) | 430TOPs | 322TOPs | 238TOPs | N/A |
| Ray Perf. | 10 GRays/s | 8 GRays/s | 6 GRays/s | N/A |
| "RTX-OPS" | 78T | 60T | 45T | N/A |
| TDP | 250W (FE: 260W) | 215W (FE: 225W) | 175W (FE: 185W) | 180W |
| GPU | TU102 | TU104 | TU106 | GP104 |
| Transistor Count | 18.6B | 13.6B | 10.8B | 7.2B |
| Architecture | Turing | Turing | Turing | Pascal |
| Manufacturing Process | TSMC 12nm "FFN" | TSMC 12nm "FFN" | TSMC 12nm "FFN" | TSMC 16nm |
| Launch Date | 09/27/2018 | 09/20/2018 | 10/2018 | 05/27/2016 |
| Launch Price | MSRP: $999, Founders: $1199 | MSRP: $699, Founders: $799 | MSRP: $499, Founders: $599 | MSRP: $599, Founders: $699 |

As we discussed at the announcement, one of the major breaks is that NVIDIA is introducing GeForce RTX as a full upper-tier x80 Ti/x80/x70 stack, where it has previously tended towards launching the x80/x70 products first, with the x80 Ti as a mid-cycle refresh or competitive response. More intriguingly, each GeForce card has its own distinct GPU (TU102, TU104, and TU106), with direct Quadro and now Tesla variants of TU102 and TU104. While we covered the Turing architecture in the preceding article, the takeaway is that each chip is proportionally cut-down, including the specialized RT Cores and Tensor Cores; with clockspeeds roughly the same as Pascal, architectural changes and efficiency enhancements will be largely responsible for performance gains, along with the greater bandwidth of 14Gbps GDDR6.
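The single precision and bandwidth figures follow from simple spec-sheet arithmetic, sketched below. It assumes the usual convention of one fused multiply-add (2 FLOPs) per CUDA core per clock at the reference boost clock, and that memory bandwidth is the per-pin data rate times the bus width. The `CARDS` dictionary and function names are illustrative, and the inputs are the specification table values.

```python
# Spec-sheet arithmetic for the cards in the specification comparison.
# Assumption: 2 FLOPs (one FMA) per CUDA core per clock at reference boost.

CARDS = {
    # name: (CUDA cores, boost clock in MHz, memory Gbps per pin, bus width in bits)
    "RTX 2080 Ti": (4352, 1545, 14, 352),
    "RTX 2080": (2944, 1710, 14, 256),
    "RTX 2070": (2304, 1620, 14, 256),
    "GTX 1080": (2560, 1733, 10, 256),
}


def fp32_tflops(cores, boost_mhz):
    """Peak FP32 throughput in TFLOPs."""
    return cores * 2 * boost_mhz * 1e6 / 1e12


def bandwidth_gbs(gbps_per_pin, bus_bits):
    """Peak memory bandwidth in GB/s (bits per second across the bus, divided by 8)."""
    return gbps_per_pin * bus_bits / 8


def summarize(cards=CARDS):
    """Map each card to (TFLOPs rounded to one decimal, GB/s)."""
    return {
        name: (round(fp32_tflops(cores, mhz), 1), bandwidth_gbs(gbps, bits))
        for name, (cores, mhz, gbps, bits) in cards.items()
    }


if __name__ == "__main__":
    for name, (tflops, bw) in summarize().items():
        print(f"{name}: {tflops} TFLOPs, {bw:.0f} GB/s")
```

The TFLOPs results match the table (13.4, 10.1, 7.5, and 8.9), and the bandwidth works out to 616 GB/s for the RTX 2080 Ti and 448 GB/s for the RTX 2080 and 2070, against 320 GB/s for the GTX 1080.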

And as far as we know, Turing technically did not trickle down from a bigger compute chip a la GP100, though at the architectural level it is strikingly similar to Volta/GV100. Die size brings more color to the story, because with TU106 at 454mm², the smallest of the bunch is frankly humungous for a FinFET die nominally dedicated to an x70 GeForce product, and comparable in size to the 471mm² GP102 inside the GTX 1080 Ti and Pascal Titans. Even excluding the cost and size of enabled RT Cores and Tensor Cores, a slab of FinFET silicon that large is unlikely to be packaged and priced like the popular $330 GTX 970 and still provide the margins NVIDIA is pursuing.

These observations are not so much to be pedantic, but more so to sketch out GeForce Turing’s positioning in relation to Pascal. Having separate GPUs for each model is the most expensive approach in terms of research and development, testing, validation, extra needed fab tooling/capacity – the list goes on. And it raises interesting questions on the matter of binning, yields, and salvage parts. Though NVIDIA certainly has the spare funds to go this route, there’s surely a better explanation than Turing being primarily designed for a premium-priced consumer product that cannot command the margins of professional parts. These all point to the known Turing GPUs as oriented for lower-volume, and NVIDIA’s financial quarterly reports indicate that GeForce product volume is a significant factor, not just ASP.

And on that note, the ‘reference’ Founders Edition models are no longer reference; the GeForce RTX 2080 Ti, 2080, and 2070 Founders Editions feature 90MHz factory overclocks and 10W higher TDP, and NVIDIA does not plan to productize a reference card themselves. But arguably the biggest change is the move from blower-style coolers with a radial fan to an open air cooler with dual axial fans. The switch in design improves cooling capacity and lowers noise, but with the drawback that the card can no longer guarantee that it can cool itself. Because the open air design re-circulates the hot air back into the chassis, it is ultimately up to the chassis to properly exhaust the heat. In contrast, a blower pushes all the hot air through the back of the card and directly out of the case, regardless of the chassis airflow or case fans.

All-in-all, NVIDIA is keeping the Founders Edition premium, which is now $200 over the baseline ‘reference.’ Though AIB partner cards are also launching today, in practice the Founders Edition pricing is effectively the retail price until the launch rush has subsided.

The GeForce RTX 20 Series Competition: The GeForce GTX 10 Series

In the end, the preceding GeForce GTX 10 series ended up occupying an odd spot in the competitive landscape. After its arrival in mid-2016, only the lower end of the stack had direct competition, due to AMD’s solely mainstream/entry Polaris-based Radeon RX 400 series. AMD’s RX 500 series refresh in April 2017 didn’t fundamentally change that, and it was not until August 2017 that the higher-end Pascal parts had direct competition from their generational equal in RX Vega. But by that time, the GTX 1080 Ti (not to mention the Pascal Titans) was unchallenged. And all the while, an Ethereum-led resurgence of cryptocurrency mining on video cards was wreaking havoc on GPU pricing and inventory, first on Polaris products, then general mainstream parts, and finally affecting any and all GPUs.

Not that NVIDIA rested on their laurels with Vega, releasing the GTX 1070 Ti anyhow. But what was constant was how the pricing models evolved with the Founders Editions schema, the $1200 Titan X (Pascal), and then the $700 GTX 1080 Ti and $1200 Titan Xp. Even the $3000 Titan V maintained gaming cred despite diverging greatly from previous Titan cards as firmly on the professional side of prosumer, basically allowing the product to capture both prosumers and price-no-object enthusiasts. Ultimately, these instances coincided with the rampant cryptomining price inflation and were mostly subsumed by it.

So the higher end of gaming video cards has been Pascal competing with itself and moving up the price brackets. For Turing, the GTX 1080 Ti has become the closest competitor. RX Vega performance hasn’t fundamentally changed, and the fallout appears to have snuffed out any Vega 10 parts, as well as Vega 14nm+ (i.e. 12nm) refreshes. As a competitive response, AMD doesn’t have many cards up their sleeves except the ones already played – game bundles (such as the current “Raise the Game” promotion), FreeSync/FreeSync 2, other hardware (CPU, APU, motherboard) bundles. Other than that, there’s a DXR driver in the works and a machine learning 7nm Vega on the horizon, but not much else is known, such as mobile discrete Vega. For AMD graphics cards on shelves right now, RX Vega is still hampered by high prices and low inventory/selection, remnants of cryptomining.

For the GeForce RTX 2080 Ti and 2080, NVIDIA would like to sell you the RTX cards as your next upgrade regardless of what card you may have now, essentially because no other card can do what Turing’s features enable: real time raytracing effects (and applied deep learning) in games. And because real time ray tracing offers graphical realism beyond what rasterization can muster, it’s not comparable to an older but still performant card. Unfortunately, no games have support for Turing’s features today, and may not for some time. Of course, NVIDIA maintains that the cards will provide expected top-tier performance in traditional gaming. Either way, while Founders Editions are fixed at their premium MSRP, custom cards are unsurprisingly listed at those same Founders Edition price points or higher.

Fall 2018 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| | $1199 | GeForce RTX 2080 Ti |
| | $799 | GeForce RTX 2080 |
| | $709 | GeForce GTX 1080 Ti |
| Radeon RX Vega 64 | $569 | |
| Radeon RX Vega 56 | $489 | GeForce GTX 1080 |
| | $449 | GeForce GTX 1070 Ti |
| | $399 | GeForce GTX 1070 |
| Radeon RX 580 (8GB) | $269/$279 | GeForce GTX 1060 6GB (1280 cores) |

Meet The New Future of Gaming: Different Than The Old One

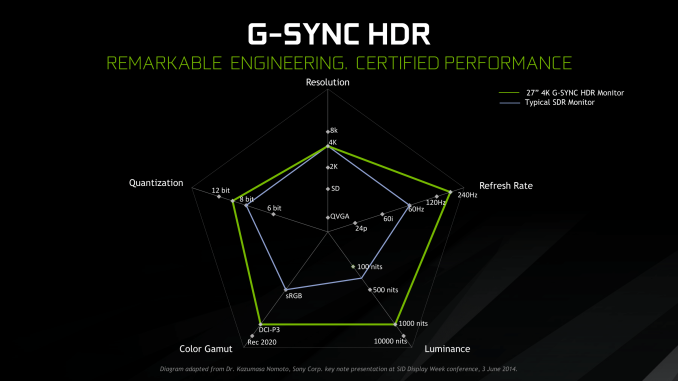

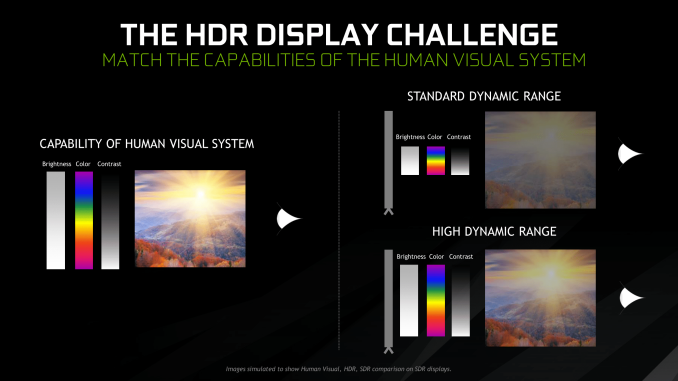

Up until last month, NVIDIA had been pushing a different, more conventional future for gaming and video cards, perhaps best exemplified by their recent launch of 27-inch 4K G-Sync HDR monitors, courtesy of Asus and Acer. The specifications and display represented – and still represent – the aspired capabilities of PC gaming graphics: 4K resolution, 144 Hz refresh rate with G-Sync variable refresh, and high-quality HDR. The future was maxing out graphics settings on a game with high visual fidelity, enabling HDR, and rendering at 4K with triple-digit average framerate on a large screen. That target was not achievable by current performance, at least, certainly not by single-GPU cards. In the past, multi-GPU configurations were a stronger option provided that stuttering was not an issue, but recent years have seen both AMD and NVIDIA take a step back from CrossFireX and SLI, respectively.

Particularly with HDR, NVIDIA expressed a qualitative rather than quantitative enhancement in the gaming experience. Faster framerates and higher resolutions were more known quantities, easily demoed and with more intuitive benefits – though in the past there was the perception of 30fps as cinematic, and currently 1080p still remains stubbornly popular – where higher resolution means more possibility for detail, and higher framerates mean smoother gameplay and video. Variable refresh rate technology soon followed, resolving the screen-tearing/V-Sync input lag dilemma, though again it took time to catch on to where it is now – nigh mandatory for a higher-end gaming monitor.

For gaming displays, HDR was substantively different than adding graphical details or allowing smoother gameplay and playback, because it meant a new dimension of ‘more possible colors’ and ‘brighter whites and darker blacks’ to gaming. Because HDR capability required support from the entire graphical chain, as well as high-quality HDR monitor and content to fully take advantage, it was harder to showcase. Added to the other aspects of high-end gaming graphics and pending the further development of VR, this was the future on the horizon for GPUs.

But today NVIDIA is switching gears, going to the fundamental way computer graphics are modelled in games today. In more realistic rendering processes, light can be modelled as rays emitted from their respective sources, but computing even a subset of the number of rays and their interactions (reflection, refraction, etc.) in a bounded space is so intensive that real time rendering has been impossible. Instead, to get the performance needed to render in real time, rasterization essentially boils down 3D objects to 2D representations to simplify the computations, significantly faking the behavior of light.

It’s on real time ray tracing that NVIDIA is staking its claim with GeForce RTX and Turing’s RT Cores. Covered more in-depth in our architecture article, NVIDIA’s real time ray tracing implementation takes all the shortcuts it can get, incorporating select real time ray tracing effects with significant denoising but keeping rasterization for everything else. Unfortunately, this hybrid rendering isn’t orthogonal to the previous concepts. Now, the ultimate experience would be hybrid rendered 4K with HDR support at high, steady, and variable framerates, though GPUs didn’t have enough performance to get to that point under traditional rasterization.

There’s still a performance cost incurred with real time ray tracing effects, except right now only NVIDIA and developers have a clear idea of what it is. What we can say is that utilizing real time ray tracing effects in games may require sacrificing some or all three of high resolution, ultra high framerates, and HDR. HDR is limited by game support more than anything else. But the first two have arguably minimum performance standards when it comes to modern high-end gaming on PC – anything under 1080p is completely unpalatable, and anything under 30fps or more realistically 45 to 60fps hurts the playability. Variable refresh rate can mitigate the latter and framedrops are temporary, but low resolution is forever.

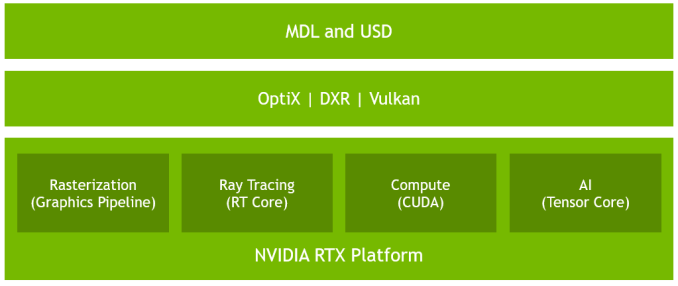

Ultimately, the real time ray tracing support needs to be implemented by developers via a supporting API like DXR – and many have been working hard on doing so – but currently there is no public timeline of application support for real time ray tracing, Tensor Core accelerated AI features, and Turing advanced shading. The list of games with support for Turing features - collectively called the RTX platform - will be available and updated on NVIDIA's site.

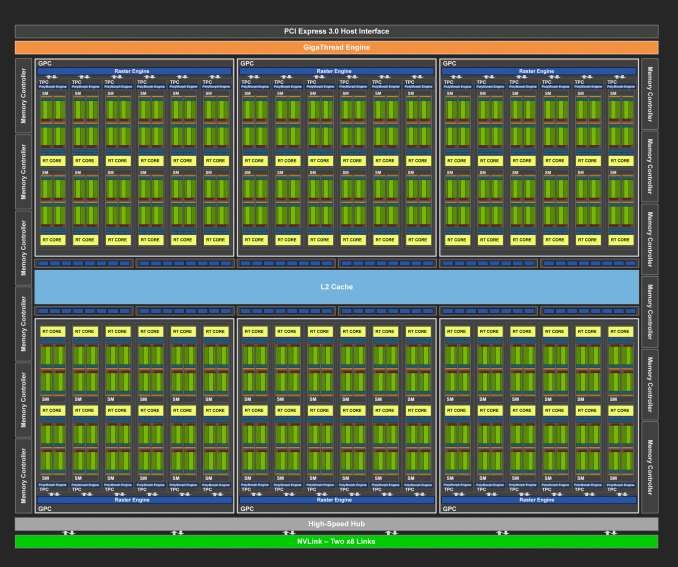

The RTX Recap: A Brief Overview of the Turing RTX Platform

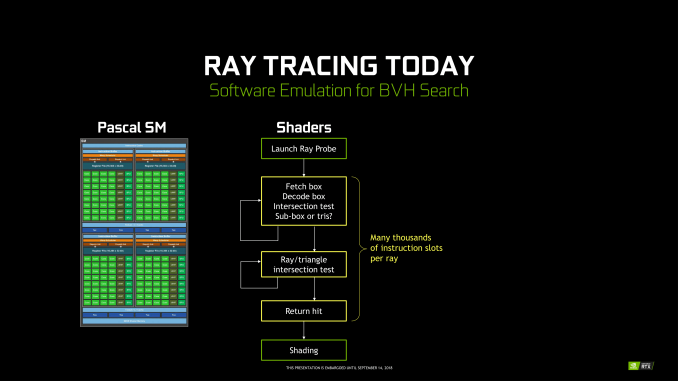

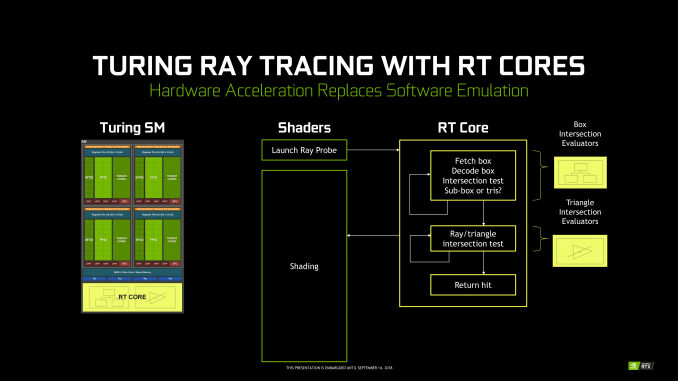

Overall, NVIDIA’s grand vision for real-time, hybridized raytracing graphics means that they needed to make significant architectural investments into future GPUs. The very nature of the operations required for ray tracing means that they don’t map to traditional SIMT execution especially well, and while this doesn’t preclude GPU raytracing via traditional GPU compute, it does end up doing so relatively inefficiently. Which means that of the many architectural changes in Turing, a lot of them have gone into solving the raytracing problem – some of which exclusively so.

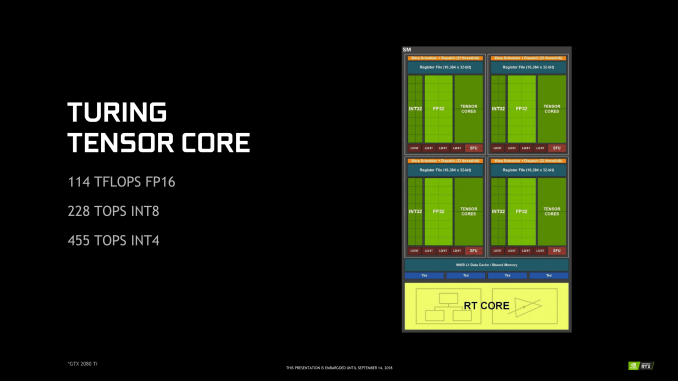

To that end, on the ray tracing front Turing introduces two new kinds of hardware units that were not present on its Pascal predecessor: RT cores and Tensor cores. The former is pretty much exactly what the name says on the tin, with RT cores accelerating the process of tracing rays, and all the new algorithms involved in that. Meanwhile the tensor cores are technically not related to the raytracing process itself, however they play a key part in making raytracing rendering viable, along with powering some other features being rolled out with the GeForce RTX series.

Starting with the RT cores, these are perhaps NVIDIA’s biggest innovation – efficient raytracing is a legitimately hard problem – however for that reason they’re also the piece of the puzzle that NVIDIA likes talking about the least. The company isn’t being entirely mum, thankfully. But we really only have a high level overview of what they do, with the secret sauce being very much secret. How NVIDIA ever solved the coherence problems that dog normal raytracing methods, they aren’t saying.

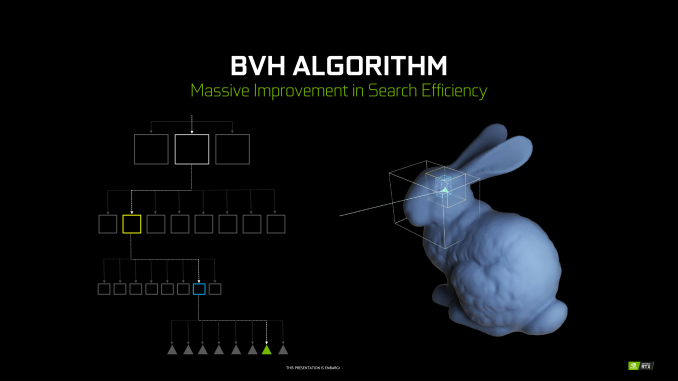

At a high level then, the RT cores can essentially be considered a fixed-function block that is designed specifically to accelerate Bounding Volume Hierarchy (BVH) searches. BVH is a tree-like structure used to store polygon information for raytracing, and it’s used here because it’s an innately efficient means of testing ray intersection. Specifically, by continuously subdividing a scene through ever-smaller bounding boxes, it becomes possible to identify the polygon(s) a ray intersects with in only a fraction of the time it would take to otherwise test all polygons.
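To make the BVH idea concrete, here is a minimal software sketch of the kind of search described above. It is not NVIDIA's implementation, which is undisclosed. The `Node` class, the median-split `build`, and the slab-test `ray_hits_box` are illustrative choices. Leaves hold only a primitive's bounding box, so the result is the list of candidate primitives that would go on to exact polygon tests.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Vec = Tuple[float, float, float]


@dataclass
class Node:
    lo: Vec                        # minimum corner of the bounding box
    hi: Vec                        # maximum corner of the bounding box
    children: List["Node"] = field(default_factory=list)
    prim_id: Optional[int] = None  # set only on leaves


def ray_hits_box(origin: Vec, direction: Vec, lo: Vec, hi: Vec) -> bool:
    """Slab test: clip the ray's parameter range against each axis-aligned slab."""
    t_near, t_far = 0.0, float("inf")
    for o, d, l, h in zip(origin, direction, lo, hi):
        if d == 0.0:
            if o < l or o > h:     # parallel to this slab and outside it
                return False
            continue
        t0, t1 = (l - o) / d, (h - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False
    return True


def build(leaves: List[Node]) -> Node:
    """Median split along x; production builders use smarter heuristics."""
    if len(leaves) == 1:
        return leaves[0]
    leaves = sorted(leaves, key=lambda n: n.lo[0])
    mid = len(leaves) // 2
    kids = [build(leaves[:mid]), build(leaves[mid:])]
    lo = tuple(min(k.lo[i] for k in kids) for i in range(3))
    hi = tuple(max(k.hi[i] for k in kids) for i in range(3))
    return Node(lo, hi, kids)


def traverse(node: Node, origin: Vec, direction: Vec, stats: dict) -> List[int]:
    """Return ids of primitives whose boxes the ray touches, counting box tests."""
    stats["box_tests"] += 1
    if not ray_hits_box(origin, direction, node.lo, node.hi):
        return []                  # the whole subtree is skipped
    if node.prim_id is not None:
        return [node.prim_id]
    hits: List[int] = []
    for child in node.children:
        hits.extend(traverse(child, origin, direction, stats))
    return hits


def demo():
    # 64 unit cubes spaced along the x axis; the ray travels along +z at x = 20.5
    leaves = [
        Node((2.0 * i, 0.0, 0.0), (2.0 * i + 1.0, 1.0, 1.0), prim_id=i)
        for i in range(64)
    ]
    root = build(leaves)
    stats = {"box_tests": 0}
    hits = traverse(root, (20.5, 0.5, -5.0), (0.0, 0.0, 1.0), stats)
    return hits, stats["box_tests"]


if __name__ == "__main__":
    print(demo())
```

In this toy scene the ray finds cube 10 after 13 box tests instead of the 64 a brute-force loop would need, and the gap widens logarithmically as the scene grows.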

NVIDIA’s RT cores then implement a hyper-optimized version of this process. What precisely that entails is NVIDIA’s secret sauce – in particular how NVIDIA came to determine the best BVH variation for hardware acceleration – but in the end the RT cores are designed very specifically to accelerate this process. The end product is a collection of two distinct hardware blocks that constantly iterate through bounding box or polygon checks respectively to test intersection, to the tune of billions of rays per second and many times that number in individual tests. All told, NVIDIA claims that the fastest Turing parts, based on the TU102 GPU, can handle upwards of 10 billion ray intersections per second (10 GigaRays/second), ten times what Pascal can do if it follows the same process using its shaders.
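As a rough sense of scale for the 10 GigaRays/second figure, the sketch below divides a ray rate by the number of pixels drawn per second. It treats NVIDIA's claimed figure as a sustained rate, which is an optimistic assumption, and the function name is illustrative.

```python
def rays_per_pixel_per_frame(rays_per_second, width, height, fps):
    """Upper bound on rays available to each pixel of each frame."""
    return rays_per_second / (width * height * fps)


if __name__ == "__main__":
    peak = 10e9  # NVIDIA's claimed 10 GigaRays/second for TU102
    for label, w, h, fps in [
        ("1080p @ 60fps", 1920, 1080, 60),
        ("4K @ 60fps", 3840, 2160, 60),
        ("4K @ 144fps", 3840, 2160, 144),
    ]:
        print(f"{label}: {rays_per_pixel_per_frame(peak, w, h, fps):.1f} rays/pixel/frame")
```

Even at the claimed peak this is about 80 rays per pixel per frame at 1080p60, about 20 at 4K60, and roughly 8 at 4K with a 144 Hz target, with every reflection or shadow ray counting against that budget.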

NVIDIA has not disclosed the size of an individual RT core, but they’re thought to be rather large. Turing implements just one RT core per SM, which means that even the massive TU102 GPU in the RTX 2080 Ti only has 72 of the units. Furthermore, because the RT cores are part of the SM, they’re tightly coupled to the SMs in terms of both performance and core counts. As NVIDIA scales down Turing for smaller GPUs by using a smaller number of SMs, the number of RT cores and resulting raytracing performance scale down with it as well. So NVIDIA always maintains the same ratio of SM resources (though chip designs can vary elsewhere).

Along with developing a means to more efficiently test ray intersections, the other part of the formula for raytracing success in NVIDIA’s book is to eliminate as much of that work as possible. NVIDIA’s RT cores are comparatively fast, but even so, ray interaction testing is still moderately expensive. As a result, NVIDIA has turned to their tensor cores to carry them the rest of the way, allowing a moderate number of rays to still be sufficient for high-quality images.

In a nutshell, raytracing normally requires casting many rays from each and every pixel in a screen. This is necessary because it takes a large number of rays per pixel to generate the “clean” look of a fully rendered image. Conversely if you test too few rays, you end up with a “noisy” image where there’s significant discontinuity between pixels because there haven’t been enough rays cast to resolve the finer details. But since NVIDIA can’t actually test that many rays in real time, they’re doing the next-best thing and faking it, using neural networks to clean up an image and make it look more detailed than it actually is (or at least, started out at).
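The link between ray count and noise can be illustrated with a toy Monte Carlo experiment. The sketch assumes a deliberately simplified model in which each ray from a pixel independently either reaches a light or does not, with probability `true_visibility`. The function names and the model are illustrative, not how any renderer is actually structured.

```python
import random
import statistics


def estimate_pixel(true_visibility, samples, rng):
    """Average `samples` random hit-or-miss ray results for one pixel."""
    hits = sum(1 for _ in range(samples) if rng.random() < true_visibility)
    return hits / samples


def pixel_noise(true_visibility, samples, pixels=2000, seed=0):
    """Standard deviation across many pixels that should all look identical."""
    rng = random.Random(seed)
    values = [estimate_pixel(true_visibility, samples, rng) for _ in range(pixels)]
    return statistics.pstdev(values)


if __name__ == "__main__":
    for n in (1, 4, 16, 64, 256):
        print(f"{n:3d} rays/pixel -> noise {pixel_noise(0.5, n):.3f}")
```

Noise in this model falls with the square root of the ray count, so halving it costs four times as many rays. That scaling is what makes a denoising pass attractive compared with simply casting more rays.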

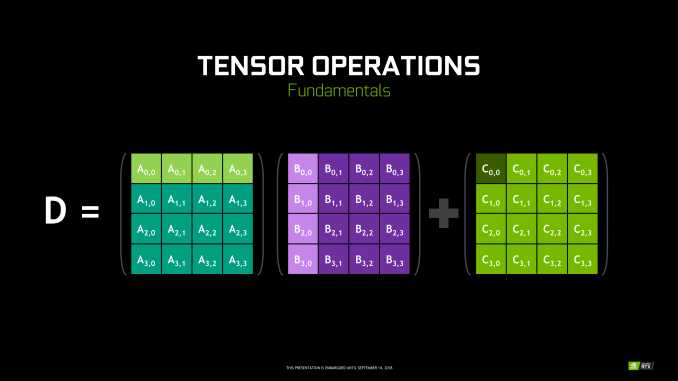

To do this, NVIDIA is tapping their tensor cores. These cores were first introduced in NVIDIA’s server-only Volta architecture, and can be thought of as a CUDA core on steroids. Fundamentally they’re just a much larger collection of ALUs inside a single core, with much of their flexibility stripped away. So instead of getting the highly flexible CUDA core, you end up with a massive matrix multiplication machine that is incredibly optimized for processing thousands of values at once (in what’s called a tensor operation). Turing’s tensor cores, in turn, double down on what Volta started by supporting newer, lower precision methods than the original that in certain cases can deliver even better performance while still offering sufficient accuracy.
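A tensor operation of this kind can be sketched as a small matrix multiply-accumulate, D = A × B + C. The 4×4 tile size below follows NVIDIA's public description of Volta's tensor cores rather than anything stated in this article, and the pure-Python function is only an illustration of the arithmetic, not of how the hardware is programmed.

```python
def mma_4x4(a, b, c):
    """Return D = A x B + C for 4x4 matrices given as lists of rows."""
    n = 4
    return [
        [c[i][j] + sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
        for i in range(n)
    ]


def fma_count(n=4):
    """Multiply-adds needed for one n x n tile operation."""
    return n * n * n


if __name__ == "__main__":
    identity = [[1 if i == j else 0 for j in range(4)] for i in range(4)]
    b = [[i * 4 + j for j in range(4)] for i in range(4)]
    ones = [[1] * 4 for _ in range(4)]
    print(mma_4x4(identity, b, ones))
    print(fma_count(), "multiply-adds per tile")
```

One tile operation bundles 64 multiply-adds into a single fixed pattern, which is where the loss of flexibility comes from: the hardware only has to do this one thing.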

As for how this applies to ray tracing, the strength of tensor cores is that tensor operations map extremely well to neural network inferencing. This means that NVIDIA can use the cores to run neural networks which will perform additional rendering tasks. In this case a neural network denoising filter is used to clean up the noisy raytraced image in a fraction of the time (and with a fraction of the resources) it would take to actually test the necessary number of rays.

No Denoising vs. Denoising in Raytracing

The denoising filter itself is essentially an image resizing filter on steroids, and can (usually) produce a similar quality image as brute force ray tracing by algorithmically guessing what details should be present among the noise. However getting it to perform well means that it needs to be trained, and thus it’s not a generic solution. Rather developers need to take part in the process, training a neural network based on high quality fully rendered images from their game.

Overall there are 8 tensor cores in every SM, so like the RT cores, they are tightly coupled with NVIDIA’s individual processor blocks. Furthermore this means tensor performance scales down with smaller GPUs (smaller SM counts) very well. So NVIDIA always has the same ratio of tensor cores to RT cores to handle what the RT cores coarsely spit out.
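Because both unit types are tied to the SM, their counts can be derived from the CUDA core count alone. The sketch below uses the per-SM ratios given in the text (1 RT core and 8 tensor cores per SM) plus one outside assumption, that a Turing SM contains 64 CUDA cores. The names are illustrative.

```python
CUDA_CORES_PER_SM = 64    # assumption: not stated in this article
RT_CORES_PER_SM = 1       # per the text
TENSOR_CORES_PER_SM = 8   # per the text


def unit_counts(cuda_cores):
    """Derive SM, RT core, and tensor core counts from a CUDA core count."""
    sms, remainder = divmod(cuda_cores, CUDA_CORES_PER_SM)
    if remainder:
        raise ValueError("CUDA core count is not a whole number of SMs")
    return {
        "sms": sms,
        "rt_cores": sms * RT_CORES_PER_SM,
        "tensor_cores": sms * TENSOR_CORES_PER_SM,
    }


if __name__ == "__main__":
    for name, cores in [("RTX 2080 Ti", 4352), ("RTX 2080", 2944), ("RTX 2070", 2304)]:
        print(name, unit_counts(cores))
```

Under that assumption, the RTX 2080 Ti's 4352 CUDA cores correspond to 68 SMs, fewer than the 72 quoted for TU102, which would be consistent with the card shipping with part of the die disabled.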

Deep Learning Super Sampling (DLSS)

Now with all of that said, unlike the RT cores, the tensor cores are not fixed function hardware in a traditional sense. They’re quite rigid in their abilities, but they are programmable none the less. And for their part, NVIDIA wants to see just how many different fields/tasks that they can apply their extensive neural network and AI hardware to.

Games of course don’t fall under the umbrella of traditional neural network tasks, as these networks lean towards consuming and analyzing images rather than creating them. None the less, along with denoising the output of their RT cores, NVIDIA’s other big gaming use case for their tensor cores is what they’re calling Deep Learning Super Sampling (DLSS).

DLSS follows the same principle as denoising – how can post-processing be used to clean up an image – but rather than removing noise, it’s about restoring detail. Specifically, how to approximate the image quality benefits of anti-aliasing – itself a roundabout way of rendering at a higher resolution – without the high cost of actually doing the work. When all goes right, according to NVIDIA the result is an image comparable to an anti-aliased image without the high cost.

Under the hood, the way this works is up to the developers, in part because they’re deciding how much work they want to do with regular rendering versus DLSS upscaling. In the standard mode, DLSS renders at a lower input sample count – typically 2x less but may depend on the game – and then infers a result, which at target resolution is similar quality to a Temporal Anti-Aliasing (TAA) result. A DLSS 2X mode exists, where the input is rendered at the final target resolution and then combined with a larger DLSS network. TAA is arguably not a very high bar to set – it’s also a hack of sorts that seeks to avoid doing real overdrawing in favor of post-processing – however NVIDIA is setting out to resolve some of TAA’s traditional inadequacies with DLSS, particularly blurring.

Now it should be noted that DLSS has to be trained per-game; it isn’t a one-size-fits-all solution. This is done in order to apply a unique neural network that’s appropriate for the game at hand. In this case the neural networks are trained using 64x SSAA images, giving the networks a very high quality baseline to work against.

None the less, of NVIDIA’s two major gaming use cases for the tensor cores, DLSS is by far the more easily implemented. Developers need only to do some basic work to add NVIDIA’s NGX API calls to a game – essentially adding DLSS as a post-processing stage – and NVIDIA will do the rest as far as neural network training is concerned. So DLSS support will be coming out of the gate very quickly, while raytracing (and especially meaningful raytracing) utilization will take much longer.

In sum, then, the upcoming game support aligns with the following table.

| Planned NVIDIA Turing Feature Support for Games | |||||

| Game | Real Time Raytracing | Deep Learning Supersampling (DLSS) | Turing Advanced Shading | ||

| Ark: Survival Evolved | Yes | ||||

| Assetto Corsa Competizione | Yes | ||||

| Atomic Heart | Yes | Yes | |||

| Battlefield V | Yes | ||||

| Control | Yes | ||||

| Dauntless | Yes | ||||

| Darksiders III | Yes | ||||

| Deliver Us The Moon: Fortuna | Yes | ||||

| Enlisted | Yes | ||||

| Fear The Wolves | Yes | ||||

| Final Fantasy XV | Yes | ||||

| Fractured Lands | Yes | ||||

| Hellblade: Senua's Sacrifice | Yes | ||||

| Hitman 2 | Yes | ||||

| In Death | Yes | ||||

| Islands of Nyne | Yes | ||||

| Justice | Yes | Yes | |||

| JX3 | Yes | Yes | |||

| KINETIK | Yes | ||||

| MechWarrior 5: Mercenaries | Yes | Yes | |||

| Metro Exodus | Yes | ||||

| Outpost Zero | Yes | ||||

| Overkill's The Walking Dead | Yes | ||||

| PlayerUnknown Battlegrounds | Yes | ||||

| ProjectDH | Yes | ||||

| Remnant: From the Ashes | Yes | ||||

| SCUM | Yes | ||||

| Serious Sam 4: Planet Badass | Yes | ||||

| Shadow of the Tomb Raider | Yes | ||||

| Stormdivers | Yes | ||||

| The Forge Arena | Yes | ||||

| We Happy Few | Yes | ||||

| Wolfenstein II | Yes | ||||

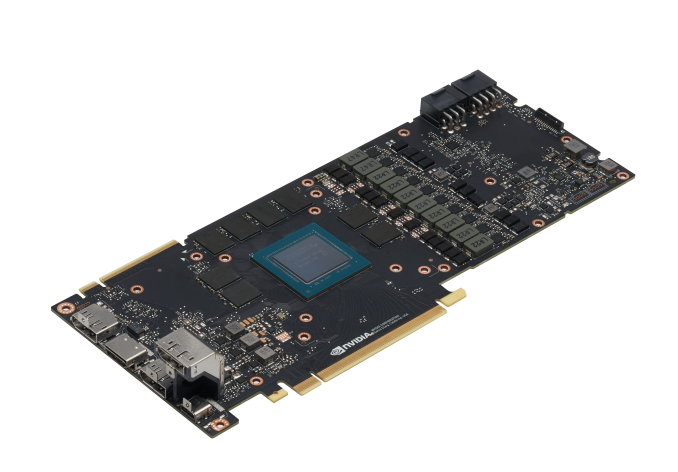

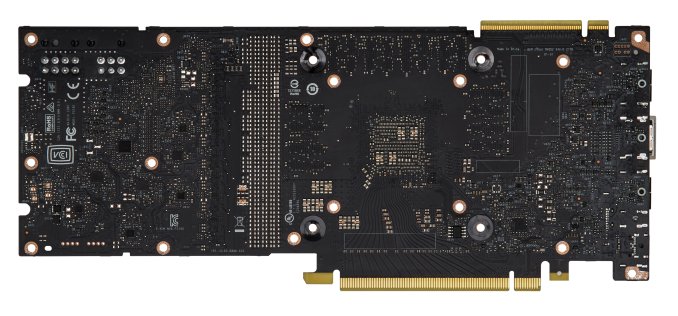

Meet The GeForce RTX 2080 Ti & RTX 2080 Founders Editions Cards

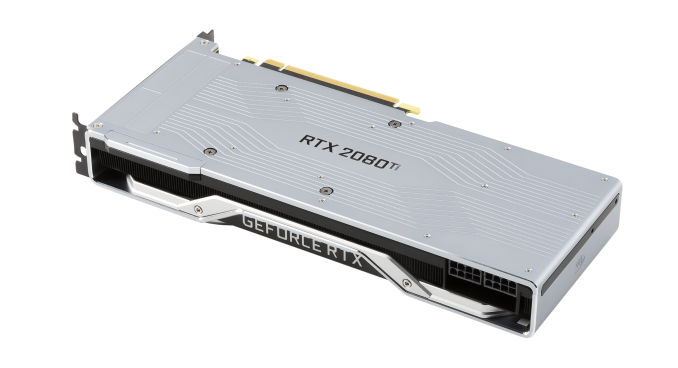

Moving onto the design of the cards, we've already mentioned the biggest change: a new open air cooler design. Along with the Founders Edition specification changes, the cards might be considered 'reference' in that they remain a first-party video card sold direct by NVIDIA, but strictly-speaking they are not because they no longer carry reference specifications.

Otherwise, NVIDIA's industrial design language prevails, and the RTX cards bring a sleek flattened aesthetic over the polygonal shroud of the 10 series. The silver shroud now encapsulates an integrated backplate, and in keeping with the presentation, the NVLink SLI connectors have a removable cover.

Internally, the dual 13-blade fans accompany a full-length vapor chamber and component baseplate, connected to a dual-slot aluminum finstack. Looking at improving efficiency and granular power control, the 260W RTX 2080 Ti Founders Edition features a 13-phase iMON DrMOS power subsystem with a dedicated 3-phase system for the 14 Gbps GDDR6, while the 225W RTX 2080 Founders Edition weighs in with an 8-phase main system and a 2-phase memory system.

As is typical with higher quality designs, NVIDIA is pushing overclocking, and for one that means a dual 8-pin PCIe power configuration for the 2080 Ti; on paper, this puts the maximum draw at 375W, though specifications-wise the TDP of the 2080 Ti Founders Edition against the 1080 Ti Founders Edition is only 10W higher. The RTX 2080 Founders Edition has the more drastic jump, however, with 8+6 pins and a 45W increase over the 1080's lone 8 pin and 180W TDP. Ultimately, it's a steady increase from the power-sipping GTX 980's 165W.
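The on-paper maximum draw comes from adding up what the slot and each auxiliary connector are rated to deliver. The sketch below uses the commonly cited PCIe ratings of 75W for the slot, 75W for a 6-pin connector, and 150W for an 8-pin connector. These ratings and the function name are assumptions added here, while the connector layouts and TDPs are the ones given in the text.

```python
SLOT_W = 75                                  # PCIe x16 slot rating
CONNECTOR_W = {"6-pin": 75, "8-pin": 150}    # auxiliary connector ratings


def max_board_power(connectors):
    """On-paper power ceiling in watts for a card with the given connectors."""
    return SLOT_W + sum(CONNECTOR_W[c] for c in connectors)


if __name__ == "__main__":
    cards = {
        # name: (connectors, TDP in watts from the text)
        "RTX 2080 Ti FE": (["8-pin", "8-pin"], 260),
        "RTX 2080 FE": (["8-pin", "6-pin"], 225),
        "GTX 1080": (["8-pin"], 180),
    }
    for name, (connectors, tdp) in cards.items():
        ceiling = max_board_power(connectors)
        print(f"{name}: {ceiling}W ceiling, {ceiling - tdp}W above TDP")
```

This reproduces the 375W figure for the 2080 Ti and gives 300W for the RTX 2080, leaving 115W and 75W of on-paper headroom over their Founders Edition TDPs, compared with 45W for the GTX 1080.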

One of the more understated changes comes with the display outputs, which thanks to Turing's new display controller now feature DisplayPort 1.4 and DSC support, the latter of which is part of the DP1.4 spec. The eye-catching addition is the VR-centric USB-C VirtualLink port, which also carries an associated 30W not included in the overall TDP.

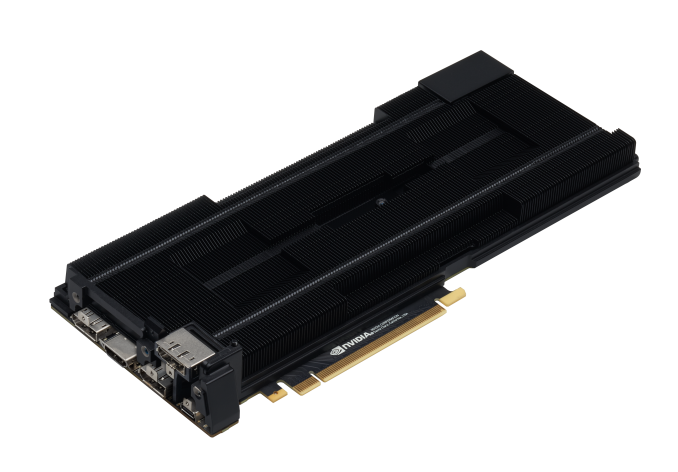

Something to note is that this change in reference design, combined with the seemingly inherent low-volume nature of the Turing GPUs, cuts into an often overlooked but highly important aspect of GPU sales: big OEMs in the desktop and mobile space. Boutique system integrators will happily incorporate the pricier higher-end parts, but from the OEM’s perspective, the GeForce RTX cards are not just priced into a new range beyond existing ones, but also bring higher TDPs and are no longer equipped with blower-style coolers in their ‘reference’ implementation.

Given that OEMs often rely on the video card being fully self-exhausting because of a blower, it would certainly preclude a lot of drop-in replacements or upgrades – at least not without further testing. It would be hard to slot into the standard OEM product cycle at the necessary prices, not to mention the added difficulty in marketing. In that respect, there is definitely more to the GeForce RTX 20 series story, and it’s somewhat hard to see OEMs offering GeForce RTX cards. It is similarly hard to see the RT Cores themselves existing below the RTX 2070, just on the basis of the raw performance needed for real time ray tracing effects at reasonable resolutions and playable framerates. So it will be very interesting to see how the rest of NVIDIA’s product stack unfolds.

The 2018 GPU Benchmark Suite & the Test

Another year marks another update to our GPU benchmark suite. This time, however, is more in line with a maintenance update than it is a complete overhaul. Although we've done some extended compute and deep learning benchmarking in the past year, and even some HDR gaming impressions, our compute and synthetic lineup remains largely the same. But before getting into the details, let's start with the bulk of benchmarking, and the biggest reason for these cards anyhow: games.

Joining the 2018 game list are Far Cry 5, Wolfenstein II, Final Fantasy XV and Middle-earth: Shadow of War. We are also bringing in F1 2018 and Total War: Warhammer II. Returning from last year are Battlefield 1, Ashes of the Singularity: Escalation, and Grand Theft Auto V. All-in-all, these games span multiple genres, differing graphics workloads, and contemporary APIs, with a nod towards modern and relatively intensive games.

AnandTech GPU Bench 2018 Game List

| Game | Genre | Release Date | API(s) |
|---|---|---|---|
| Battlefield 1 | FPS | Oct. 2016 | DX11 (DX12) |
| Far Cry 5 | FPS | Mar. 2018 | DX11 |
| Ashes of the Singularity: Escalation | RTS | Mar. 2016 | DX12 (DX11, Vulkan) |
| Wolfenstein II: The New Colossus | FPS | Oct. 2017 | Vulkan |
| Final Fantasy XV: Windows Edition | JRPG | Mar. 2018 | DX11 |
| Grand Theft Auto V | Action/Open world | Apr. 2015 | DX11 |
| Middle-earth: Shadow of War | Action/RPG | Sep. 2017 | DX11 |
| F1 2018 | Racing | Aug. 2018 | DX11 |
| Total War: Warhammer II | RTS | Sep. 2017 | DX11 (DX12) |

That said, Ashes as a DX12 trailblazer may not be as hot and fresh as it once was, especially considering that the pace of DX12 and Vulkan adoption in new games has waned. The circumstances are worth an investigation on their own, but the learning curve required in modern low-level API and the subsequent return may not be convincing right now. As a more general remark, most developers and publishers tend not to advertise or document DX12 support as much as they used to, nor is it clearly labelled in game specifications as many times DX11 is the unmentioned default.

Particularly for NVIDIA and GeForce RTX, pushing DXR and raytracing means pushing DX12, of which DXR is a component. The API has a backstop in the form of Xbox consoles and Windows 10, and it stands to matter further if multi-GPU is to make a comeback, whether that's via compatible workloads (VR), flexible usage (ray tracing workload topologies), or just the plain old inevitability of Moore's Law. So this is less likely to be the slow end of DX12.

In terms of data collection, measurements were gathered either using built-in benchmark tools or with AMD's open-source Open Capture and Analytics Tool (OCAT), which is itself powered by Intel's PresentMon. 99th percentiles were obtained or calculated in a similar fashion, as OCAT natively obtains 99th percentiles. In general, we prefer 99th percentiles over minimums, as they more accurately represent the gaming experience and filter out any artificial outliers.
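As a rough illustration of why a 99th percentile figure is more robust than a minimum, the sketch below derives both from a list of per-frame times. The frame times are hypothetical, the function names are our own, and the nearest-rank percentile used here is an assumption for illustration; it is not necessarily the exact method that OCAT or any built-in benchmark applies.

```python
import math

def average_fps(frame_times_ms):
    """Average FPS over a run: total frames divided by total seconds."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)

def percentile_fps(frame_times_ms, pct=99.0):
    """FPS at the pct-th percentile frame time (nearest-rank method)."""
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100.0))
    return 1000.0 / ordered[rank - 1]

def minimum_fps(frame_times_ms):
    """FPS implied by the single slowest frame in the run."""
    return 1000.0 / max(frame_times_ms)

# Hypothetical run: 195 smooth frames, 4 slower frames, and 1 large hitch.
frame_times = [10.0] * 195 + [20.0] * 4 + [100.0]

print(f"average:         {average_fps(frame_times):.1f} fps")
print(f"99th percentile: {percentile_fps(frame_times):.1f} fps")
print(f"minimum:         {minimum_fps(frame_times):.1f} fps")
```

In this made-up run, a single 100 ms hitch drags the minimum down to 10 fps, while the 99th percentile reflects the 20 ms frames that make up the slow tail.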

We've also swapped out Blenchmark, which seems to have been abandoned in terms of updates, in favor of a BMW render from the Blender Institute Cycles Benchmark, and a more recent one from a Cycles benchmark developer on Blenderartists.org. There were concerns with Blenchmark's small tile size, which is not very applicable to GPUs, and in terms of usability we also ran into some GPU detection errors which were linked to inaccurate Blenchmark Python code.

Otherwise, we are also keeping an eye on a few trends and upcoming developments:

- MLPerf machine learning benchmark suite

- Blender Benchmark

- Futuremark's 3DMark DirectX Raytracing benchmark

- DXR and Vulkan raytracing extension support in games

Another point is that we do not have a permanent HDR monitor for our testbed, which would be necessary to incorporate HDR game testing in the near future; 5 games in our list actually support HDR. And as we look at technologies that enhance or alter image quality (e.g. HDR, Turing's DLSS), we will want to find a better way of comparing differences. This is particularly tricky with HDR, as screenshots are inapplicable and even accurate photographs will most likely be viewed on an SDR screen. With DLSS, there is a built-in reference quality based on 64x supersampling, which in deep learning terms is the 'ground truth'; an intuitive solution would be to use a neural network based method of analyzing quality differences, but that is likely beyond our scope.

The following tech demos and test applications were provided via NVIDIA:

- Star Wars 'Reflections' Demo (includes real time ray tracing and DLSS support)

- Final Fantasy XV Official Benchmark (includes DLSS support)

- Asteroids Demo (features mesh shading and variable LOD)

- Epic Infiltrator Demo (features DLSS)

The Testbed

Because NVIDIA is not productizing any other reference-quality GeForce RTX 2080 Ti and 2080 card besides the Founders Editions, which are non-reference by specifications, we've gone ahead and emulated the true reference specifications by applying a 90MHz downclock and lowering the TDP by roughly 10W. This is to keep comparisons standardized and apples-to-apples, as we always look at reference-to-reference results.

In a classic case of Murphy's Law, our usual PSU started malfunctioning around the time of the review, and given the time constraints we couldn't arrange a 1:1 replacement in time. As it is a digital PSU, we were beginning to use it for PCIe power readings to augment system measurements, but for now we will have to stick with power draw at the wall. For the time being, we've swapped it out with another high-quality and high-wattage PSU.

| CPU: | Intel Core i7-7820X @ 4.3GHz |

| Motherboard: | Gigabyte X299 AORUS Gaming 7 (F9g) |

| Power Supply: | EVGA 1000 G3 |

| Hard Disk: | OCZ Toshiba RD400 (1TB) |

| Memory: | G.Skill TridentZ DDR4-3200 4 x 8GB (16-18-18-38) |

| Case: | NZXT Phantom 630 Windowed Edition |

| Monitor: | LG 27UD68P-B |

| Video Cards: | AMD Radeon RX Vega 64 (Air Cooled), NVIDIA GeForce RTX 2080 Ti Founders Edition, NVIDIA GeForce RTX 2080 Founders Edition, NVIDIA GeForce GTX 1080 Ti Founders Edition, NVIDIA GeForce GTX 1080 Founders Edition, NVIDIA GeForce GTX 980 Ti, NVIDIA GeForce GTX 980 |

| Video Drivers: | NVIDIA Release 411.51 Press, AMD Radeon Software Adrenalin Edition 18.9.1 |

| OS: | Windows 10 Pro (April 2018 Update) |

| Spectre/Meltdown Mitigations | Yes, both |

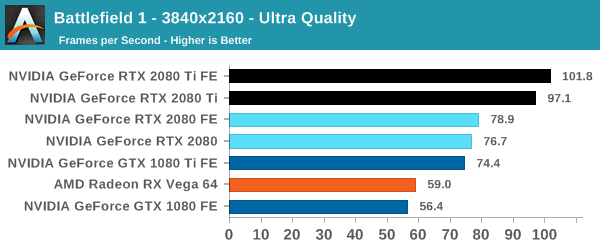

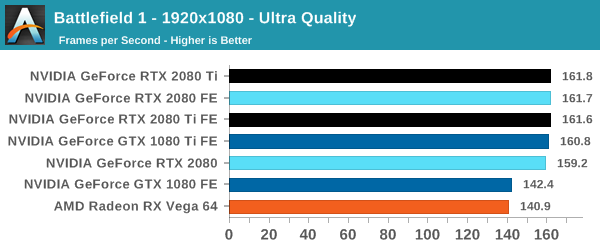

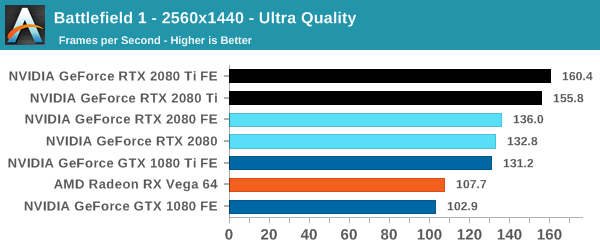

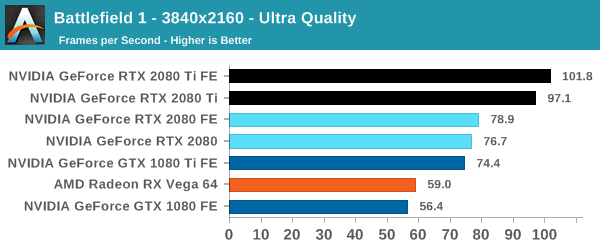

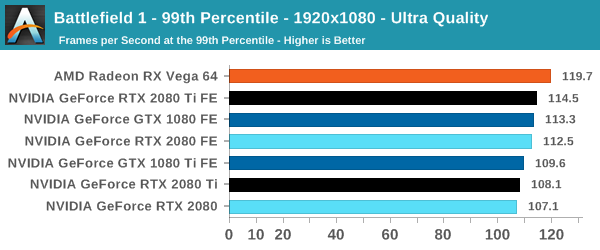

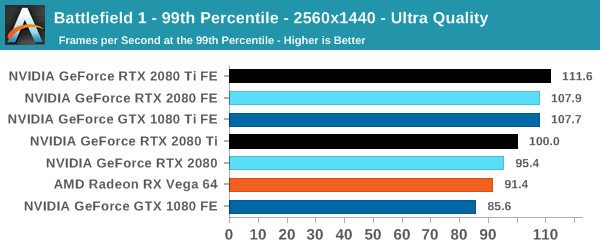

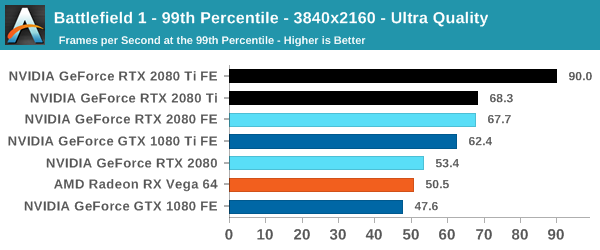

Battlefield 1 (DX11)

Battlefield 1 returns from the 2017 benchmark suite, which it joined with a bang as DICE brought gamers the long-awaited AAA World War 1 shooter in late 2016. With detailed maps, environmental effects, and pacy combat, Battlefield 1 provides a generally well-optimized yet demanding graphics workload. The next Battlefield game from DICE, Battlefield V, completes the nostalgia circuit with a return to World War 2, but more importantly for us, is one of the flagship titles for GeForce RTX real time ray tracing, although at this time it isn't ready.

The Ultra preset is used with no alterations. As these benchmarks are from single player mode, our rule of thumb with multiplayer performance still applies: multiplayer framerates generally dip to half our single player framerates. Battlefield 1 also supports HDR (HDR10, Dolby Vision).

Battlefield 1 charts: Average FPS and 99th Percentile at 1920x1080, 2560x1440, and 3840x2160

At this point, the RTX 2080 Ti is fast enough to touch the CPU bottleneck at 1080p, but it keeps its substantial lead at 4K. Nowadays, Battlefield 1 runs rather well on a gamut of cards and settings, and in optimized high-profile games like these, the 2080 in particular will need to make sure that the veteran 1080 Ti doesn't edge too close. So we see the Founders Edition specs are enough to firmly plant the 2080 Founders Edition faster than the 1080 Ti Founders Edition.

The outlying low 99th percentile reading for the 2080 Ti occurred on repeated testing, and we're looking into it further.

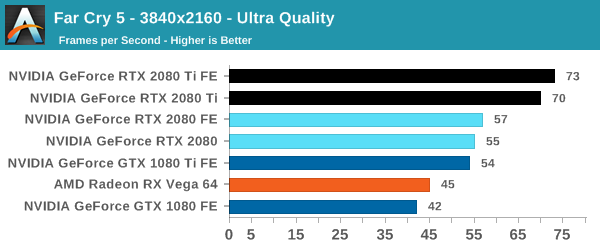

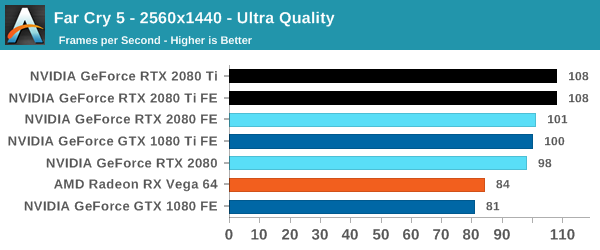

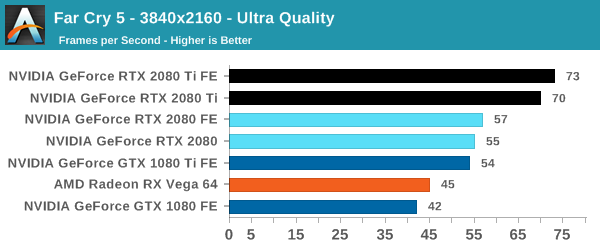

Far Cry 5 (DX11)

The latest title in Ubisoft's Far Cry series lands us right into the unwelcoming arms of an armed militant cult in Montana, one of the many middles-of-nowhere in the United States. With a charismatic and enigmatic adversary, gorgeous landscapes of the northwestern American flavor, and lots of violence, it is classic Far Cry fare. Graphically intensive in an open-world environment, the game mixes in action and exploration.

Far Cry 5 does support Vega-centric features with Rapid Packed Math and Shader Intrinsics. Far Cry 5 also supports HDR (HDR10, scRGB, and FreeSync 2).

Far Cry 5 charts: Average FPS at 1920x1080, 2560x1440, and 3840x2160

Both 20 series cards hit the high-quality playability metric of ~60fps or higher, though it's really the 2080 Ti that pulls away and offers beyond previous generation performance. The difference between the GeForce Turings is again very similar to Battlefield 1 with gains in the 30% range, which is meaningful but not leaps and bounds.

Regardless, 1080 Ti tier and higher performance quickly begins to be bottlenecked by the CPU at 1080p.

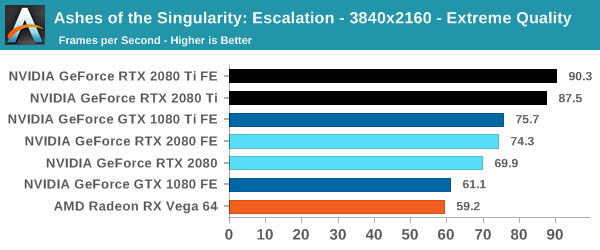

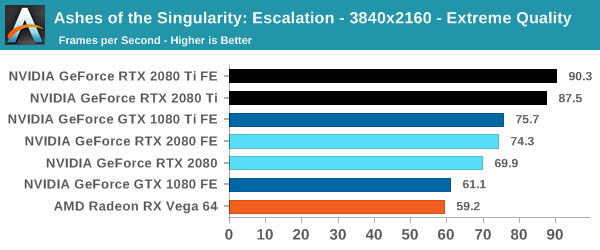

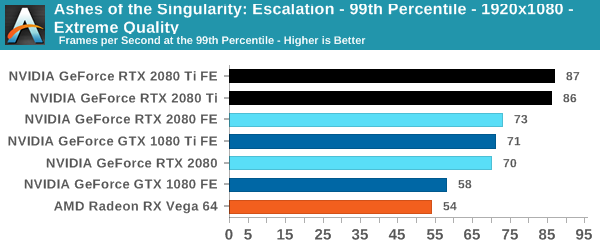

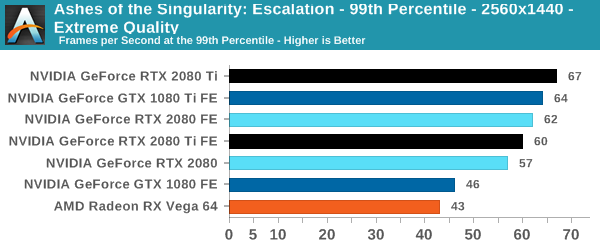

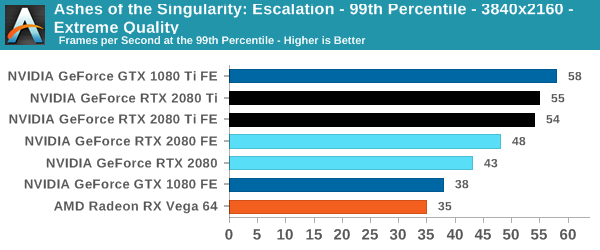

Ashes of the Singularity: Escalation (DX12)

A veteran from both our 2016 and 2017 game lists, Ashes of the Singularity: Escalation remains the DirectX 12 trailblazer, with developer Oxide Games tailoring and designing the Nitrous Engine around such low-level APIs. The game makes the most of DX12's key features, from asynchronous compute to multi-threaded work submission and high batch counts. And with full Vulkan support, Ashes provides a good common ground between the forward-looking APIs of today. Its built-in benchmark tool is still one of the most versatile ways of measuring in-game workloads in terms of output data, automation, and analysis; by offering such a tool publicly and as part-and-parcel of the game, it's an example that other developers should take note of.

Settings and methodology remain identical to its usage in the 2016 GPU suite. To note, we are utilizing the original Ashes Extreme graphical preset, which, compared to the current one, has MSAA dialed down from x4 to x2 and an adjusted Texture Rank (MipsToRemove in settings.ini).

Ashes charts: Average FPS and 99th Percentile at 1920x1080, 2560x1440, and 3840x2160

For Ashes, the 20 series fare a little worse in their gains over the 10 series, with an advantage at 4K around 14 to 22%. Here, the Founders Edition power and clock tweaks are essential in avoiding the 2080 FE outright losing to the 1080 Ti, though our results are putting the Founders Editions essentially neck-and-neck.

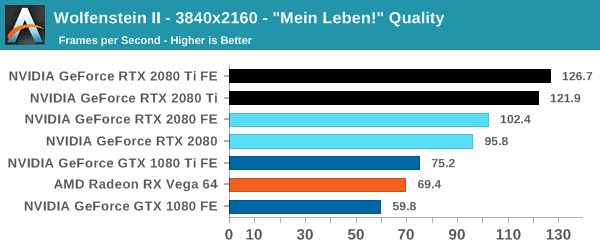

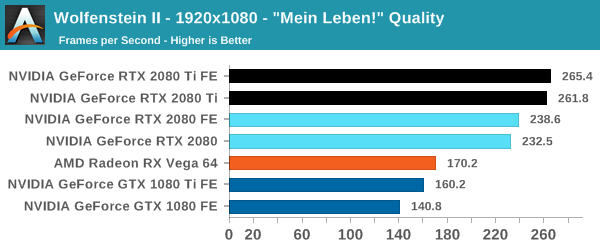

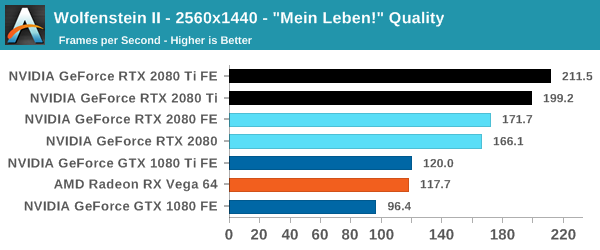

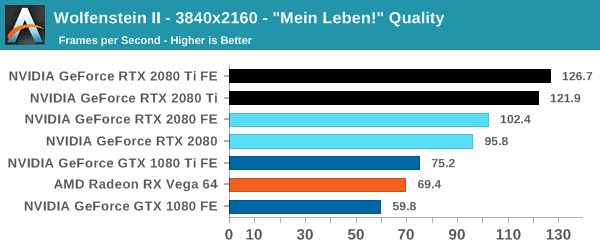

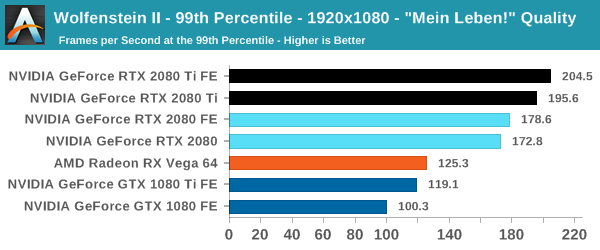

Wolfenstein II: The New Colossus (Vulkan)

id Software is popularly known for a few games involving shooting stuff until it dies, just with different 'stuff' for each one: Nazis, demons, or other players while scorning the laws of physics. Wolfenstein II is the latest of the first, the sequel of a modern reboot series developed by MachineGames and built on id Tech 6. While the tone is significantly less pulpy nowadays, the game is still a frenetic FPS at heart, succeeding DOOM as a modern Vulkan flagship title and arriving as a pure Vulkan implementation, unlike DOOM, which was originally OpenGL.

Featuring a Nazi-occupied America of 1961, Wolfenstein II is lushly designed yet not oppressively intensive on the hardware, something that goes well with its pace of action, which emerges suddenly from a level design flush with alternate historical details.

The highest quality preset, "Mein leben!", was used. Wolfenstein II also features Vega-centric GPU Culling and Rapid Packed Math, as well as Radeon-centric Deferred Rendering; in accordance with the preset, neither GPU Culling nor Deferred Rendering was enabled.

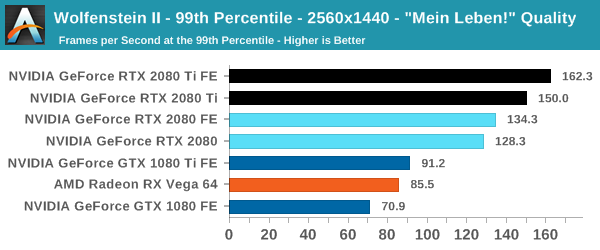

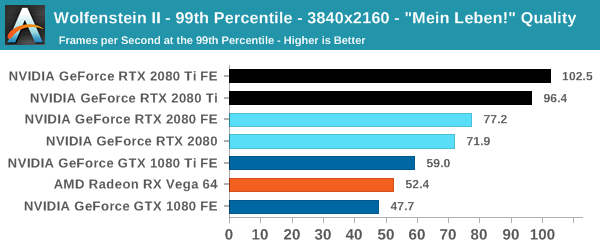

Wolfenstein II charts: Average FPS and 99th Percentile at 1920x1080, 2560x1440, and 3840x2160

I am actually more impressed with Wolfenstein II's Vulkan implementation than with the absurd 250+ framerates, if only because many other games hold back the GPU with a CPU bottleneck. In DOOM, there was a hard 200fps cap because of engine/implementation limitations, a bit of a corner case, but manufacturers make 240Hz monitors nowadays, too. On a GPU performance profiling side, of course, reducing the CPU bottleneck makes comparing powerful GPUs much easier at 1080p, and with a better signal-to-noise ratio than at 4K.

This is combined with the fact that at 4K, the 20 series are looking at a huge 60 to 68% lead over the 10 series, and we'll be cross-referencing these performance deltas with other sections of the game. Even in the case of a 'flat-track bully' scenario where the 2080 Ti is running up the score, the 2080 Ti's speed compared to the 2080 is somewhat less than expected at 24 to 27%. It's a somewhat intriguing result for an optimized Vulkan game, as the game runs and scales generally well across the board; it has also not gone unnoticed that both the RX Vega cards and GeForce Turing cards outperform their expected positions, though without the graphics workload details it's hard to speculate with substance. With framerates like these, the 4K HDR dream at 144 Hz is a real possibility, and it would be interesting to compare with Titan V and Titan Xp results.

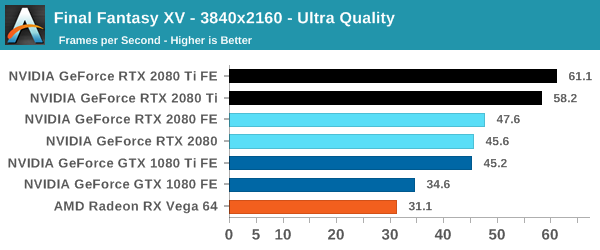

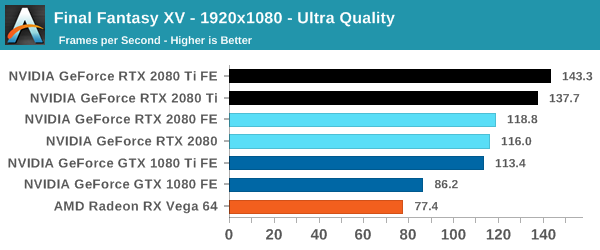

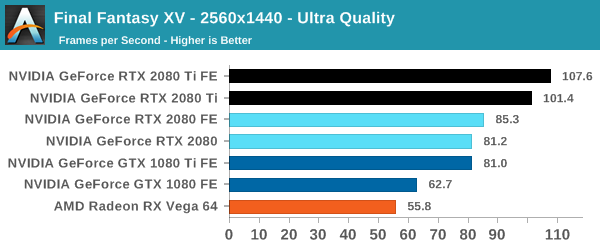

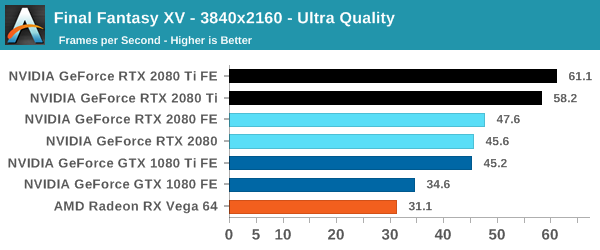

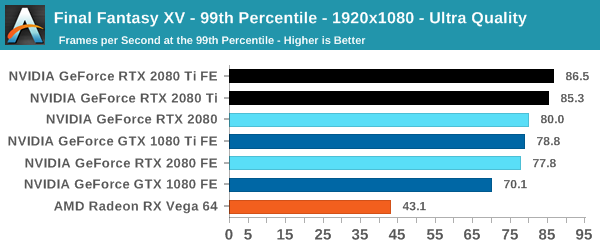

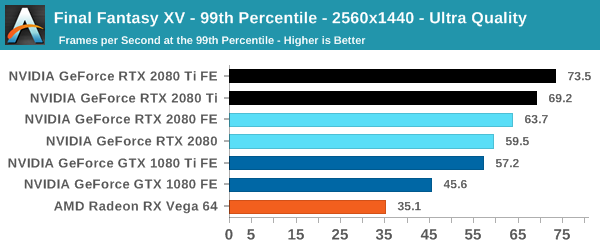

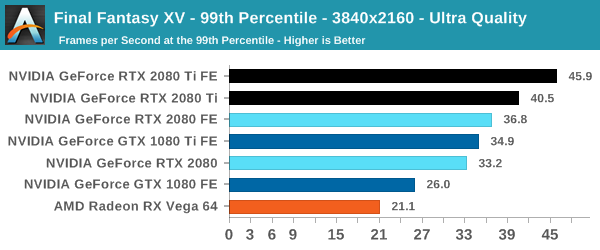

Final Fantasy XV (DX11)

Upon arriving on PC earlier this year, Final Fantasy XV: Windows Edition was given a graphical overhaul as it was ported over from console, the fruits of Square Enix's successful partnership with NVIDIA, with hardly any hint of the troubles during Final Fantasy XV's original production and development.

In preparation for the launch, Square Enix opted to release a standalone benchmark that they have since updated. Using the Final Fantasy XV standalone benchmark gives us a lengthy standardized sequence to utilize OCAT. Upon release, the standalone benchmark received criticism for performance issues and general bugginess, as well as confusing graphical presets and performance measurement by 'score'. In its original iteration, the graphical settings could not be adjusted, leaving the user to the presets that were tied to resolution and hidden settings such as GameWorks features.

Since then, Square Enix has patched the benchmark with custom graphics settings and bugfixes to be much more accurate in profiling in-game performance and graphical options, though the 'score' measurement remains. For our testing, we enable or adjust settings to the highest except for NVIDIA-specific features and 'Model LOD', the latter of which is left at standard. Final Fantasy XV also supports HDR, and it will support DLSS at a later date.

Final Fantasy XV charts: Average FPS and 99th Percentile at 1920x1080, 2560x1440, and 3840x2160

NVIDIA, of course, is working closely with Square Enix, and the game is naturally expected to run well on NVIDIA cards in general, but the 1080 Ti truly lives up to its gaming flagship reputation in matching the RTX 2080. With Final Fantasy XV, the Founders Edition power and clocks again prove highly useful in the 2080 FE pipping the 1080 Ti, while the 2080 Ti FE makes it across the psychological 60fps mark at 4K.

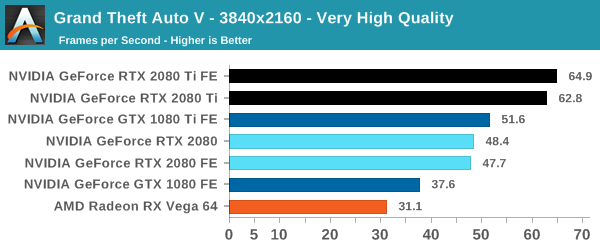

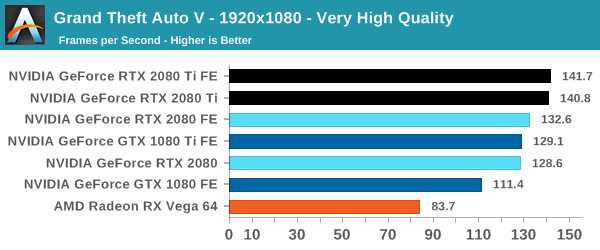

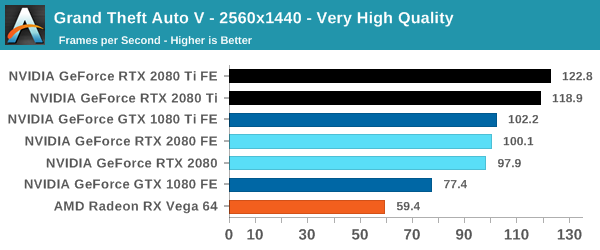

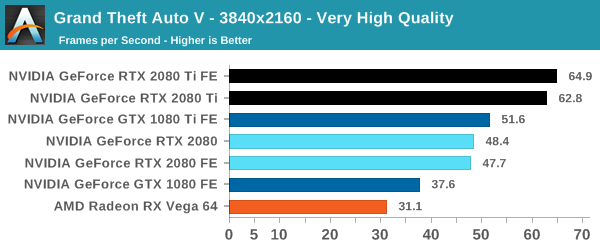

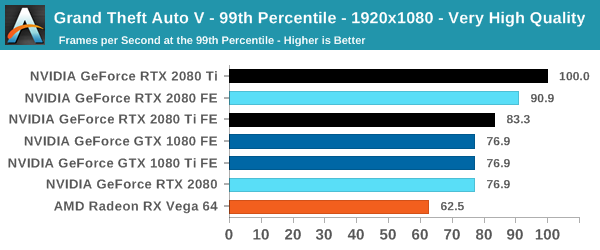

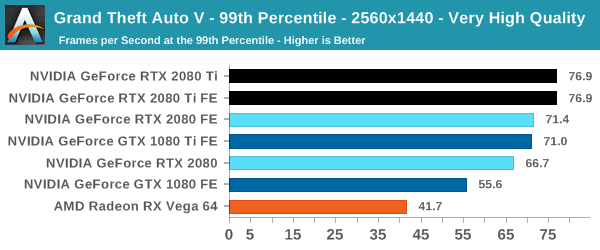

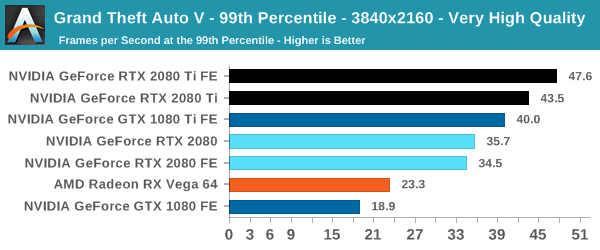

Grand Theft Auto V (DX11)

Now a truly venerable title, GTA V is a veteran of past game suites that is still as graphically demanding as they come. As an older DX11 title, it provides a glimpse into the graphically intensive games of yesteryear that don't incorporate the latest features. Originally released for consoles in 2013, the PC port came with a slew of graphical enhancements and options. Just as importantly, GTA V includes a rather intensive and informative built-in benchmark, somewhat uncommon in open-world games.

The settings are identical to its previous appearances, which are custom as GTA V does not have presets. To recap, a "Very High" quality is used, where all primary graphics settings are turned up to their highest setting, except grass, which is at its own very high setting. Meanwhile 4x MSAA is enabled for direct views and reflections. This setting also involves turning on some of the advanced rendering features - the game's long shadows, high resolution shadows, and high definition flight streaming - but not increasing the view distance any further.

GTA V charts: Average FPS and 99th Percentile at 1920x1080, 2560x1440, and 3840x2160

There was an interesting issue during testing that affected the RTX cards at 4K; running the benchmark would result in a blank screen for the entirety of the run. The image would appear with Alt+Enter to put it in windowed mode, but disappear again back in fullscreen. An external overlay resolved the issue, but performance results were identical either way. We really didn't have time to investigate thoroughly, but GTA V, especially with Social Club, can be quite finicky and I hesitate to call it a driver bug without digging into it more.

It's a testament to both GTA V and the nature of graphics optimization work that a GeForce card can only now average 60fps. Even still, it's restricted to the RTX 2080 Ti performance tier, which is roughly where the Titan V stands as well. Regardless, the results represent the performance scenario that NVIDIA is ultimately hoping to avoid: the 1080 Ti exceeding the 2080 in performance even with the Founders Edition tweaks. At this point, the 1080 Ti is a mature card and the offerings will skew towards tried-and-true halo custom cards, factory overclocked and well-cooled. Plain performance regression in reference settings is not something the RTX 2080 can easily afford with the higher price - Founders Edition or otherwise.

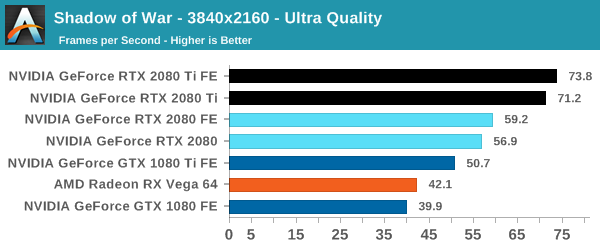

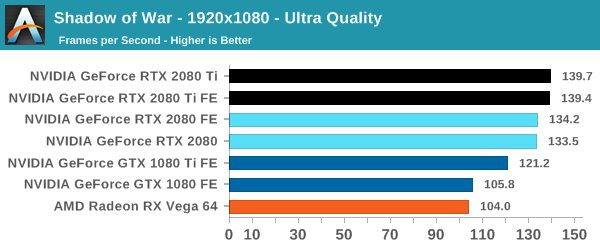

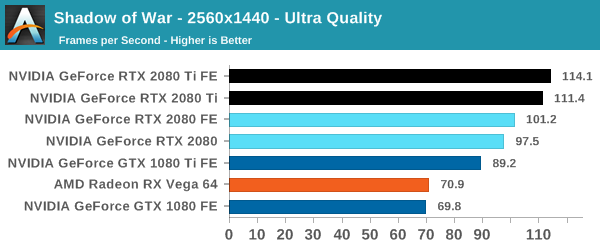

Middle-earth: Shadow of War (DX11)

Next up is Middle-earth: Shadow of War, the sequel to Shadow of Mordor. Developed by Monolith, whose last hit was arguably F.E.A.R., Shadow of Mordor returned them to the spotlight with an innovative NPC rival generation and interaction system called the Nemesis System, along with a storyline based on J.R.R. Tolkien's legendarium, all running on a highly modified version of the engine that originally powered F.E.A.R. in 2005.

Using the new LithTech Firebird engine, Shadow of War improves on the detail and complexity, and with free add-on high resolution texture packs, offers itself as a good example of getting the most graphics out of a non state-of-the-art engine. Shadow of War also supports HDR (HDR10).

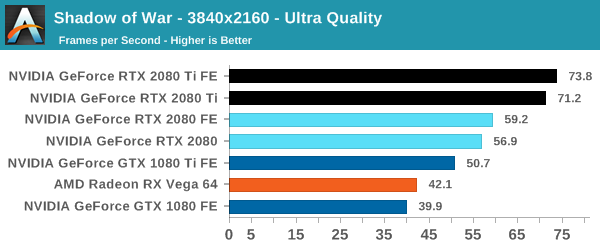

Shadow of War charts: Average FPS at 1920x1080, 2560x1440, and 3840x2160

Shadow of War is one of the more favorable games in terms of the 20 series' performance gains over Pascal, and overall the RTX 2080 is neatly faster than the 1080 Ti, though with a 12 to 17% edge at 4K, not a huge one. The 2080 Ti is comfortably beyond that, though actually its advantage over the 2080 is on the lower end compared to other games with a roughly 25% uplift at 4K.

Regardless, at 1080p we start to see the flagships reach the CPU bottleneck.

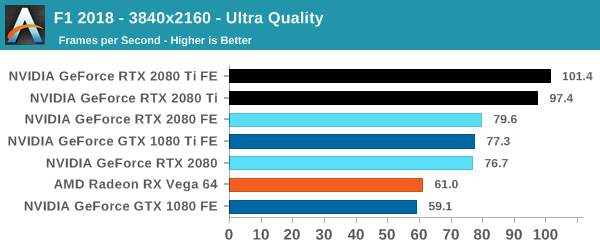

F1 2018 (DX11)

Succeeding F1 2016 is F1 2018, Codemasters' latest iteration in their official Formula One racing games. It features a slimmed down version of Codemasters' traditional built-in benchmarking tools and scripts, something that is surprisingly absent in DiRT 4.

Aside from keeping up-to-date on the Formula One world, F1 2017 added HDR support, which F1 2018 has maintained; otherwise, we should see any newer versions of Codemasters' EGO engine find their way into F1. Graphically demanding in its own right, F1 2018 keeps a useful racing-type graphics workload in our benchmarks.

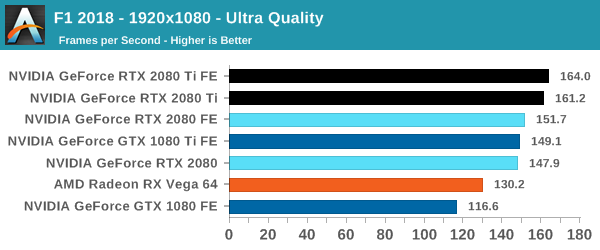

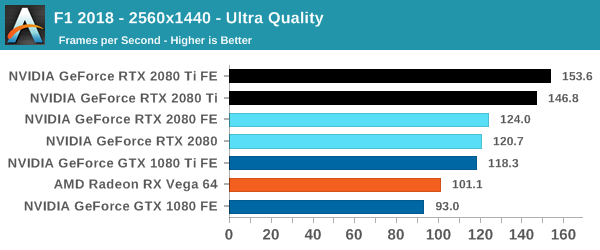

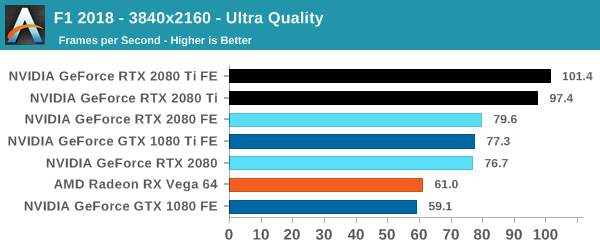

F1 2018 charts: Average FPS at 1920x1080, 2560x1440, and 3840x2160

Surprisingly, F1 2018 runs into a similar situation, with the 1080 Ti performing too close for comfort to the 2080. The CPU load at lower resolutions grants a slight reprieve, but in any case the F1 series - and by extension, F1 2018 - is somewhat of a known quantity, especially as a generally polished game and HDR supporting title.

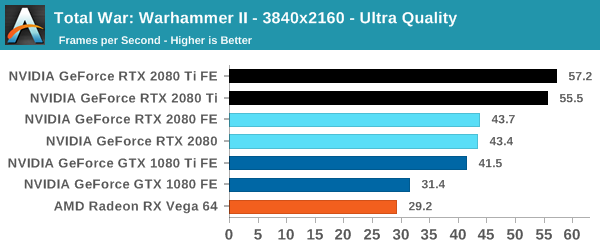

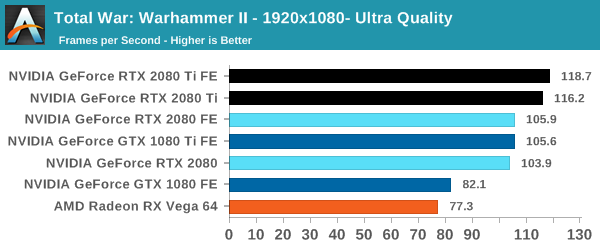

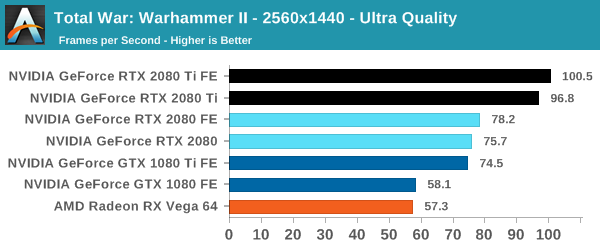

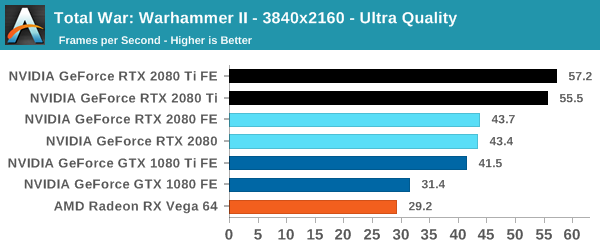

Total War: Warhammer II (DX11)

Last in our 2018 game suite is Total War: Warhammer II, built on the same engine as Total War: Warhammer. While there is a more recent Total War title, Total War Saga: Thrones of Britannia, that game was built on the 32-bit version of the engine. The first TW: Warhammer was a DX11 game that was to some extent developed with DX12 in mind, with preview builds showcasing DX12 performance. In Warhammer II, however, the matter appears to have been dropped, with DX12 mode still marked as beta and also showing performance regressions for both vendors.

It's unfortunate because Creative Assembly themselves have acknowledged the CPU-bound nature of their games, and with re-use of game engines as spin-offs, DX12 optimization would have continued to provide benefits, especially if the future of graphics in RTS-type games will lean towards low-level APIs.

There are now three benchmarks with varying graphics and processor loads; we've opted for the Battle benchmark, which appears to be the most graphics-bound.

Total War: Warhammer II charts: Average FPS at 1920x1080, 2560x1440, and 3840x2160

At 1080p, the cards quickly run into the CPU bottleneck, which is to be expected with top-tier video cards and the CPU intensive nature of RTS'es. The Founders Edition power and clock tweaks prove less useful here at 4K, but the models are otherwise in keeping with the expected 1-2-3 lineup of 2080 Ti, 2080, and 1080 Ti, with the latter two roughly on par and the 2080 Ti pushing further.

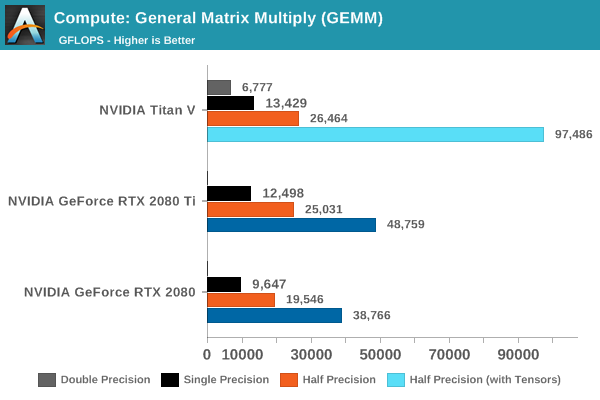

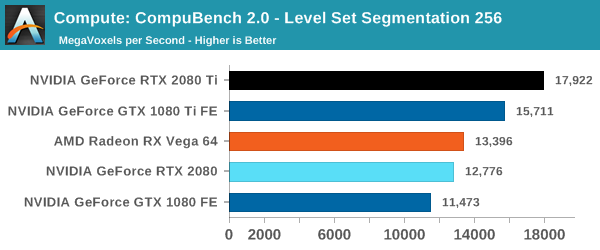

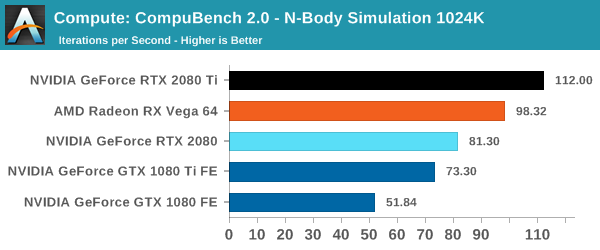

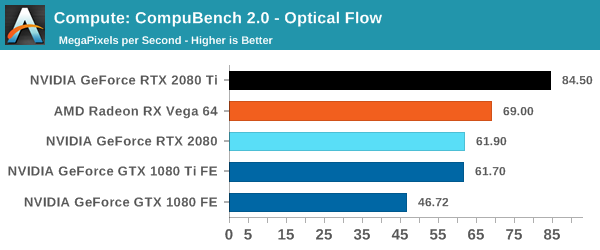

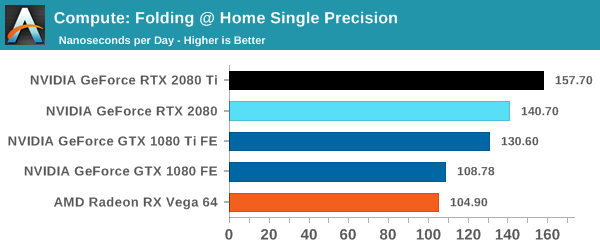

Compute & Synthetics

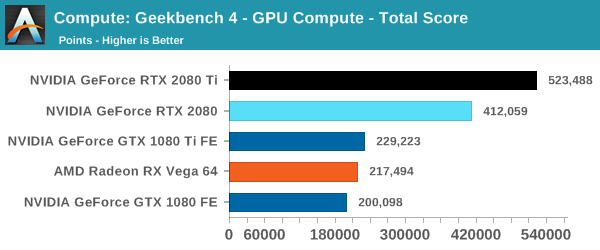

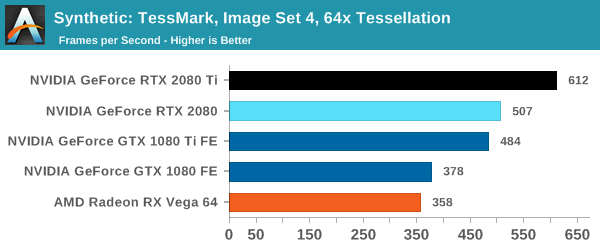

Moving on to the low-level compute guts of the cards, we take a look at compute and synthetic results starting with tensor core accelerated GEMM.

While we are using binaries compiled for Volta, Turing is backwards compatible in that respect as it is in the same compute capability family (sm_75 compared to Volta's sm_70). In terms of compute resources, the RTX 2080 Ti's 544 tensor cores and 1545MHz boost clock are not far off of the Titan V's 640 tensor cores and 1455MHz boost clock, so the latest Turing-optimized binaries should better reflect the RTX 2080 Ti's raw GEMM acceleration capabilities. Likewise for the 368 tensor core RTX 2080, whose tensor-accelerated HGEMM performance in TFLOPS is somewhere around 20% less than the RTX 2080 Ti.
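To put "not far off" into numbers, the following sketch estimates peak FP16 tensor throughput from the tensor core counts and boost clocks quoted above. It assumes each tensor core completes 64 fused multiply-adds per clock, which is our assumption for illustration; sustained clocks, accumulation precision, memory bandwidth, and the binaries used will all move measured HGEMM results away from these theoretical peaks.

```python
# Assumption: each tensor core performs 64 FP16 fused multiply-adds per clock.
FMA_PER_TENSOR_CORE_PER_CLOCK = 64
FLOPS_PER_FMA = 2  # one multiply plus one add

def peak_tensor_tflops(tensor_cores, boost_mhz):
    """Theoretical peak tensor throughput in TFLOPS at the boost clock."""
    flops_per_second = (tensor_cores * FMA_PER_TENSOR_CORE_PER_CLOCK
                        * FLOPS_PER_FMA * boost_mhz * 1e6)
    return flops_per_second / 1e12

# Tensor core counts and boost clocks as quoted in the text.
rtx_2080_ti = peak_tensor_tflops(544, 1545)
titan_v = peak_tensor_tflops(640, 1455)

print(f"RTX 2080 Ti: {rtx_2080_ti:.1f} TFLOPS")
print(f"Titan V:     {titan_v:.1f} TFLOPS")
print(f"RTX 2080 Ti / Titan V: {rtx_2080_ti / titan_v:.2f}")
```

Under that assumption, the RTX 2080 Ti's higher boost clock makes up for some of its tensor core deficit, leaving its theoretical peak at roughly 90% of the Titan V's.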


Power, Temperature, and Noise

With a large chip, more transistors, and more frames, questions always pivot to the efficiency of the card, and how well it sits with the overall power consumption, thermal limits of the default ‘coolers’, and the local noise of the fans when at load. Users buying these cards can be expected to push some pixels, which will have knock-on effects inside a case. For our testing, we use a case for the best real-world results in these metrics.

Power

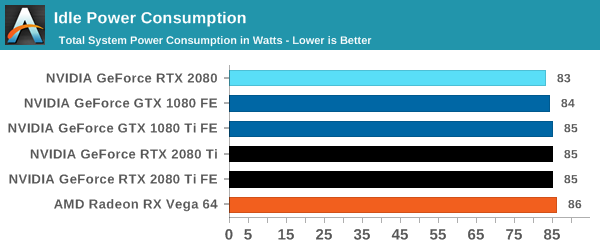

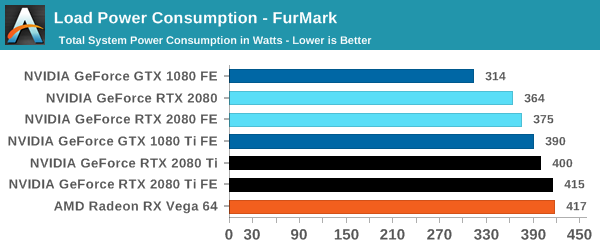

All of our graphics cards pivot around the 83-86W level when idle, though it is noticeable that they are in sets: the 2080 is below the 1080, the 2080 Ti sits above the 1080 Ti, and the Vega 64 consumes the most.

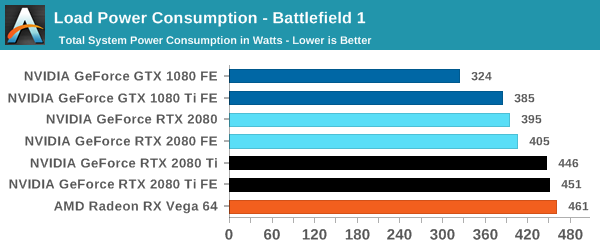

When we crank up a real-world title, all the RTX 20-series cards are pushing more power. The 2080 consumes 10W over the previous generation flagship, the 1080 Ti, and the new 2080 Ti flagship goes for another 50W system power beyond this. Still not as much as the Vega 64, however.

For a synthetic like Furmark, the RTX 2080 results show that it consumes less than the GTX 1080 Ti, although the GTX 1080 is some 50W less. The margin between the RTX 2080 FE and RTX 2080 Ti FE is some 40W, which is indicative of the official TDP differences. At the top end, the RTX 2080 Ti FE and RX Vega 64 are consuming equal power, however the RTX 2080 Ti FE is pushing through more work.

For power, the overall differences are quite clear: the RTX 2080 Ti is a step up above the RTX 2080, however the RTX 2080 shows that it is similar to the previous generation 1080/1080 Ti.

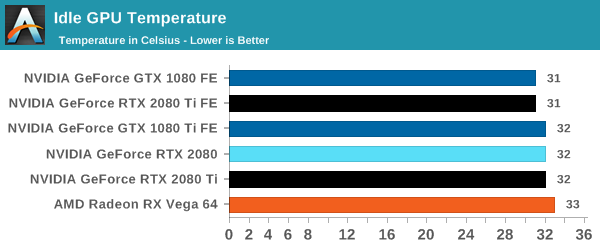

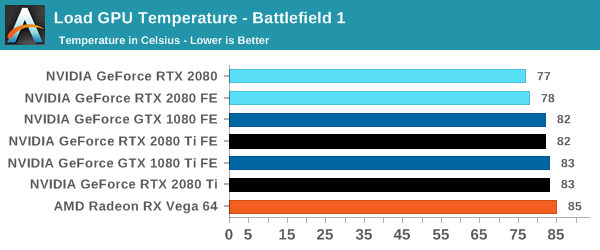

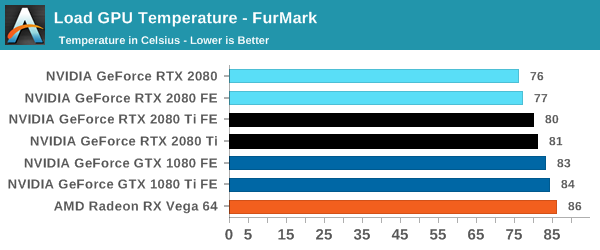

Temperature

Straight off the bat, moving from the blower cooler to the dual fan coolers, we see that the RTX 2080 holds its temperature a lot better than the previous generation GTX 1080 and GTX 1080 Ti.

In every load scenario, the RTX 2080 is several degrees cooler than both the previous generation and the RTX 2080 Ti. The 2080 Ti fares well in Furmark, coming in at a lower temperature than the 10-series, but trades blows in Battlefield. This is a win for the dual fan cooler, rather than the blower.

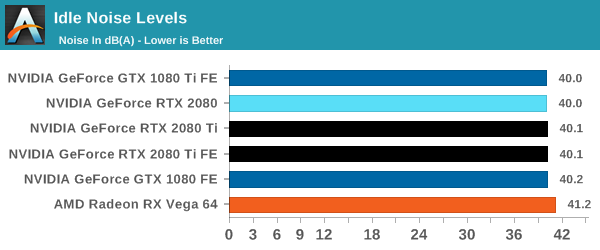

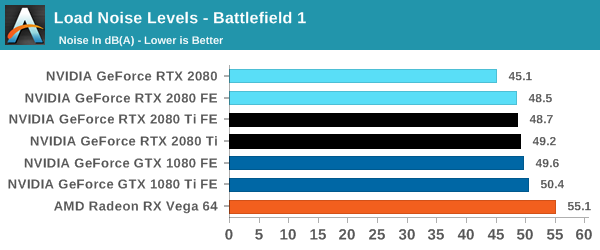

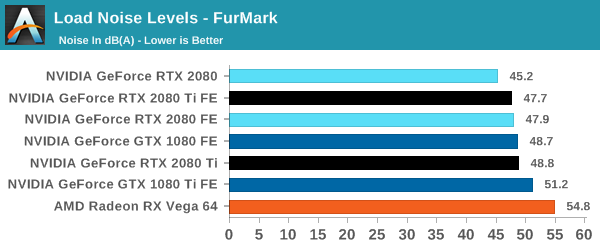

Noise

Similar to the temperature, the noise profile of the two larger fans rather than a single blower means that the new RTX cards can be quieter than the previous generation: the RTX 2080 wins here, showing that it can be 3-5 dB(A) lower than the 10-series and perform similarly. The added power needed for the RTX 2080 Ti means that it is still competing against the GTX 1080, but it always beats the GTX 1080 Ti by comparison.

Final Words

Bringing this review to a close, we've seen it all and yet we have more to see. Here's what we know right now. NVIDIA has once again aimed for the top and reached it, securing the performance crown for another presumably long stint. Or arguably extending the current reign, but either way, in terms of traditional performance the new GeForce RTX 20 series further extends NVIDIA's performance lead.

By the numbers, then, in out-of-the-box game performance the reference RTX 2080 Ti is around 32% faster than the GTX 1080 Ti at 4K gaming. With Founders Edition specifications (a 10W higher TDP and 90MHz boost clock increase) the lead grows to 37%, which doesn't fundamentally change the matchup but isn't a meaningless increase.

Moving on to the RTX 2080, what we see in our numbers is a 35% performance improvement over the GTX 1080 at 4K, moving up to 40% with Founders Edition specifications. In absolute terms, this actually puts it on very similar footing to the GTX 1080 Ti, with the RTX 2080 pulling ahead, but only by 8% or so. So the two cards aren't equals in performance, but by video card standards they're incredibly close, especially as that level of difference is where factory overclocked cards can equal their silicon superiors. It's also around the level where we expect that cards might 'trade blows', and in fact this does happen in Ashes of the Singularity and GTA V. As a point of comparison, we saw the GTX 1080 Ti at launch come in around 32% faster than the GTX 1080 at 4K.

In other words, the RTX 2080 has GTX 1080 Ti tier conventional performance, faster by single-digit percentages in our games at 4K. Naturally, under workloads that take advantage of RT Cores or Tensor Cores, the lead would increase, though right now there’s no way of translating that into a robust real world measurement.

So generationally speaking, the GeForce RTX 2080 represents a much smaller performance gain than the GTX 1080's 71% performance uplift over the GTX 980. In fact, it's in the area of about half that, with the RTX 2080 Founders Edition bringing 40% more performance and the reference specification 35% more performance over the GTX 1080. Looking further back, the GTX 980's uplift over previous generations can be divvied up in a few ways, but compared to the GTX 680 it brought a similar 75% gain.
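The "about half" comparison can be checked with simple arithmetic on the percentages quoted above. The sketch below is only a back-of-the-envelope calculation; the variable and function names are ours, and chaining uplifts across generations is a rough estimate rather than a measured result.

```python
def fraction_of_uplift(new_gain_pct, old_gain_pct):
    """How large the new generational gain is relative to the old one."""
    return new_gain_pct / old_gain_pct

def compound_uplift(*gains_pct):
    """Chain successive percentage gains into one overall speedup factor."""
    factor = 1.0
    for gain in gains_pct:
        factor *= 1.0 + gain / 100.0
    return factor

GTX_1080_OVER_980 = 71.0      # percent, as quoted in the text
RTX_2080_FE_OVER_1080 = 40.0  # percent, Founders Edition specifications
RTX_2080_REF_OVER_1080 = 35.0 # percent, reference specifications

fe_share = fraction_of_uplift(RTX_2080_FE_OVER_1080, GTX_1080_OVER_980)
ref_share = fraction_of_uplift(RTX_2080_REF_OVER_1080, GTX_1080_OVER_980)
print(f"FE gain is {fe_share:.0%} of the previous generation's gain")
print(f"Reference gain is {ref_share:.0%} of the previous generation's gain")

# Rough estimate only: GTX 980 -> GTX 1080 -> RTX 2080 (reference).
two_gen = compound_uplift(GTX_1080_OVER_980, RTX_2080_REF_OVER_1080)
print(f"Estimated GTX 980 to RTX 2080 speedup: {two_gen:.2f}x")
```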

But the performance hasn't come for free in terms of energy efficiency, which was one of Maxwell's hallmark strengths. TDPs have been increased across the x80 Ti/x80/x70 board, and the consequence is greater power consumption. The RTX 2080 features power draw at the wall slightly more than the GTX 1080 Ti's draw, while the RTX 2080 Ti's system consumption leaps by more than 60W to reach near-Vega 64 power draw at the wall.

Putting aside those who will always purchase the most performant card on the market, regardless of value proposition, most gamers will want to know: "Is it worth the price?" Unfortunately, we don't have enough information to really say - and neither does anyone else, except NVIDIA and their partner developers. This is because the RT Cores, tensor cores, Turing shader features, and the supporting software are all built into the price. But NVIDIA's key features - such as real time ray tracing and DLSS - aren't being utilized by any games right at launch. In fact, it's not very clear at all when those games might arrive, because NVIDIA ultimately is reliant on developers here.

Even when they do arrive, we can at least assume that enabling real time ray tracing will incur a performance hit. Based on the hands-on and comparing performance in the demos, which we were not able to analyze and investigate in time for publication, it seems that DLSS plays a huge part in halving the input costs. In the Star Wars Reflections demo, we measured the RTX 2080 Ti Founders Edition managing around a 14.7fps average at 4K and 31.4fps average at 1440p when rendering the real time ray traced scene. With DLSS enabled, it jumps to 33.8 and 57.2fps.
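Converting those demo framerates into frame times makes the effect of DLSS easier to read. The sketch below uses only the four averages quoted above; the helper names are ours, and the results describe this one demo on one card rather than DLSS in general.

```python
def frame_time_ms(fps):
    """Average time per frame in milliseconds for a given framerate."""
    return 1000.0 / fps

def dlss_summary(base_fps, dlss_fps):
    """Return (speedup factor, fraction of the original frame time remaining)."""
    speedup = dlss_fps / base_fps
    remaining = frame_time_ms(dlss_fps) / frame_time_ms(base_fps)
    return speedup, remaining

# Star Wars Reflections demo averages on the RTX 2080 Ti FE, from the text:
# (without DLSS, with DLSS)
results = {"4K": (14.7, 33.8), "1440p": (31.4, 57.2)}

for resolution, (base, dlss) in results.items():
    speedup, remaining = dlss_summary(base, dlss)
    print(f"{resolution}: {frame_time_ms(base):.1f} ms -> "
          f"{frame_time_ms(dlss):.1f} ms per frame "
          f"({speedup:.2f}x faster, {remaining:.0%} of the original frame time)")
```

By this measure the per-frame cost drops to somewhat under half at 4K and a little over half at 1440p.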

So where does that leave things? For traditional performance, both RTX cards line up with current NVIDIA offerings, giving a straightforward point-of-reference for gamers. The observed performance delta between the RTX 2080 Founders Edition and GTX 1080 Ti Founders Edition is at a level achievable by the Titan Xp or overclocked custom GTX 1080 Ti’s. Meanwhile, NVIDIA mentioned that the RTX 2080 Ti should be equal to or faster than the Titan V, and while we currently do not have the card on hand to confirm this, the performance difference from when we did review that card is in-line with NVIDIA's statements.

The easier takeaway is that these cards would not be a good buy for GTX 1080 Ti owners, as the RTX 2080 would be a sidegrade and the RTX 2080 Ti would be offering 37% more performance for $1200, a performance difference akin to upgrading to a GTX 1080 Ti from a GTX 1080. For prospective buyers in general, it largely depends on how long the GTX 1080 Ti will be on shelves, because as it stands, the RTX 2080 is around $90 more expensive and less likely to be in stock. Looking to the RTX 2080 Ti, diminishing returns start to kick in, where paying 43% or 50% more gets you 27-28% more performance.
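The diminishing returns can be expressed as relative performance per dollar, using only the percentages quoted above. The sketch below is illustrative: the function name is ours, and because the text does not say which performance figure goes with which price premium, it simply evaluates every combination.

```python
def relative_value(perf_gain_pct, price_increase_pct):
    """Performance per dollar of the pricier card relative to the cheaper one.

    A result below 1.0 means each dollar buys less performance.
    """
    return (1.0 + perf_gain_pct / 100.0) / (1.0 + price_increase_pct / 100.0)

# RTX 2080 Ti versus RTX 2080, percentages as quoted in the text.
price_increases = (43.0, 50.0)
perf_gains = (27.0, 28.0)

values = {}
for price in price_increases:
    for perf in perf_gains:
        values[(price, perf)] = relative_value(perf, price)
        print(f"+{price:.0f}% price, +{perf:.0f}% performance: "
              f"{values[(price, perf)]:.2f}x performance per dollar")
```

Every combination lands between roughly 0.85x and 0.90x, meaning the RTX 2080 Ti delivers about 10 to 15% less performance per dollar than the RTX 2080 on these figures.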

The benefits of the new hardware cannot be captured in our standard benchmarks alone. The DXR ecosystem is in its adolescence, if not infancy. Of course, NVIDIA is hardly a passive player in this. The GeForce RTX initiative is a key inflection point in NVIDIA's new push to change and mold computer graphics and gaming, and it's highly unlikely that anything about this launch wasn't completely deliberate. There was a conscious decision to launch the cards now, basically as soon as was practically possible. Waiting even a month might have aligned the launch with a few DXR and DLSS supporting games, though at the cost of missing the prime holiday window.

Taking a step back, we should highlight NVIDIA's technological achievement here: real time ray tracing in games. Even with all the caveats and potentially significant performance costs, not only was the feat achieved but implemented, and not with proofs-of-concept but with full-fledged AA and AAA games. Today is a milestone from a purely academic view of computer graphics.

But as we alluded to in the Turing architecture deep dive, graphics engineers and developers, and the consumers that purchase the fruits of their labor, are all playing different roles in pursuing the real time ray tracing dream. So NVIDIA needs a strong buy-in from the consumers, while the developers might need much less convincing. Ultimately, gamers can't be blamed for wanting to game with their cards, and on that level they will have to think long and hard about paying extra to buy graphics hardware that is priced extra with features that aren't yet applicable to real-world gaming, and yet only provides performance comparable to previous generation video cards.