Original Link: https://www.anandtech.com/show/11917/nvidia-holodeck-hands-on-early-access

GTC Europe 2017: Hands-On with NVIDIA's Holodeck, Early Access Announced

by Ian Cutress & Nate Oh on October 13, 2017 10:25 AM EST- Posted in

- GPUs

- Trade Shows

- NVIDIA

- VR

- GTC Europe

- AR

- Holodeck

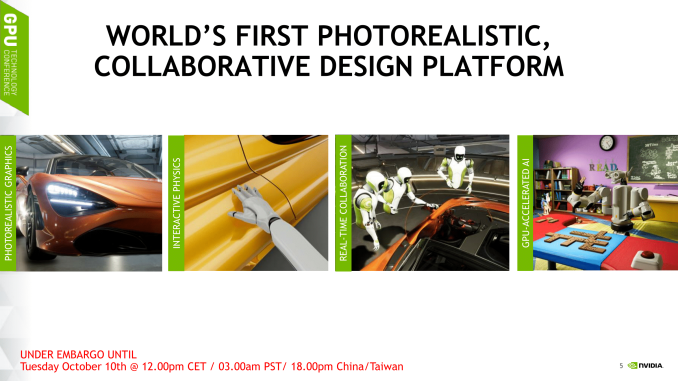

- Collaboration

In addition to the Drive PX Pegasus, NVIDIA announced Holodeck Early Access at GTC Europe 2017 in Germany. The platform was first announced at the primary GTC 2017 as Project Holodeck, with early access slated for September. NVIDIA explained that the name change reflects Holodeck having moved from an exploratory project to a real product. To recap, Holodeck is essentially a photorealistic VR environment for collaborative design and virtual prototyping, where high-resolution 3D models can be brought into a real-time VR space. The idea today is that NVIDIA is soliciting community feedback and input via early access as it continues to develop Holodeck as a product.

As disclosed earlier at GTC 2017, Holodeck is powered by the Unreal Engine. Holodeck is also featured in NVIDIA’s Isaac Lab, a virtual AI training environment. For the online collaboration feature, multiple users currently connect to the same session. Because Holodeck uses Unreal, sessions will be similar to hosting a video game: one person acts as the host, and all other participants need the assets in advance in order to connect. This may require some fine-tuning, especially as some high-resolution models run into gigabytes of data and must be shared in advance with every party intending to connect.

While Holodeck does feature PhysX, the technology was described as powering primary interactive scene physics, as opposed to solely secondary physics processing. This differs from the previous use of the PhysX terminology, and to that end, NVIDIA confirmed the terminology change.

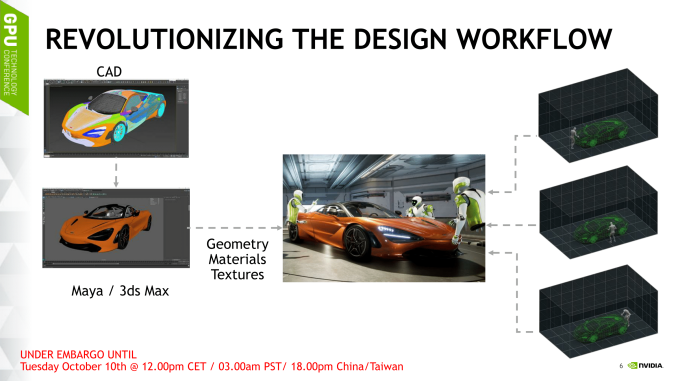

In terms of the Holodeck Early Access workflow, a CAD model is brought into Maya or 3ds Max, where the materials, geometry, and textures are exported to a specific file format and then imported into Holodeck using a plugin. Other CAD environments will be supported over time through Holodeck APIs. On that note, NVIDIA commented that Holodeck is intended for users with pre-existing CAD expertise.

Holodeck will be distributed through Steam and will be managed via Steam Keys. As it is a graphically intensive VR experience, Holodeck Early Access will officially support the GeForce GTX 1080 Ti, Titan Xp, or Quadro P6000, in addition to an HMD. Early Access will be limited, with a fixed number of passes issued every week, but is intended to be open to all later.

Hands-On Experience: Holodeck

Ian Cutress

Perhaps the first thing I should say about testing the Holodeck demo is that I managed to break it to the point of needing to restart the software. The whole ecosystem is still in an early beta stage, so there are some rough edges to get around, but for the most part it worked.

NVIDIA gave me an HTC Vive headset in a dedicated Holodeck room, and placed me in what could be described as a Google Tilt Brush type of environment. The main difference was that in front of me was a supercar, and we went through the various features that Holodeck currently provides in single-user mode.

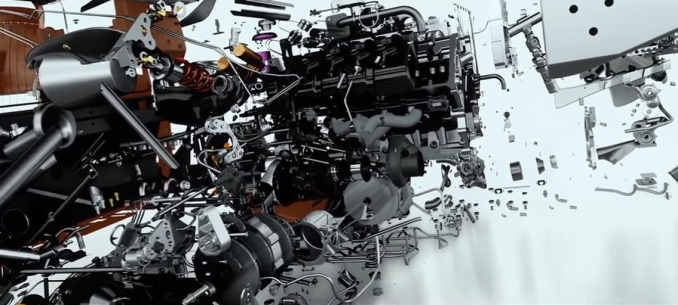

This meant walking around the very detailed car, and exploding it into each one of its 30,000 individual pieces.

For the most part these models were detailed, but we were approaching the fine limits of what we can currently do with VR: while 50 million polygons were on screen for all the parts, when I tried to focus on one, such as a screw, the model of the screw consisted of only hundreds (or even tens) of polygons and was not very detailed. I would have expected to be able to determine the direction of the screw thread, but that was not the case here. Due to the lack of detail, I could barely make out that it required a Phillips screwdriver. Unbeknownst to me at the time, Holodeck can pick up and place parts (with or without physics), so I didn’t try to pick up the parts before de-exploding the vehicle.
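As a rough back-of-the-envelope check on those figures, the sketch below spreads the quoted 50 million polygons evenly over the 30,000 parts. The even split is purely an assumption for illustration, and the function name is hypothetical. In practice large body panels presumably take far more than the average, which would be consistent with a small screw being left with only hundreds or tens of polygons.

```python
def average_polygons_per_part(total_polygons, part_count):
    """Mean polygon budget per part if the total were spread evenly."""
    return total_polygons / part_count


# Figures quoted in the hands-on: about 50 million polygons, 30,000 parts.
average = average_polygons_per_part(50_000_000, 30_000)
print(f"Average polygons per part: {average:.0f}")  # prints 1667
```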

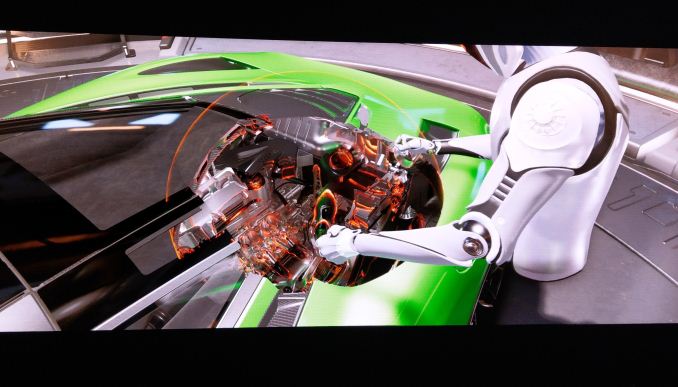

The next feature on display was the clipping tool. This is a bubble that can be used to adjust the clipping of the view and rearrange parts of the z-order buffer to look inside the vehicle.

The bubble can be resized and repositioned, with the idea that several people can use their own bubbles in the environment, or pass them around. In this view the model was again very detailed; however, where two surfaces were close to each other there was some obvious texture clipping going on, causing a flickering between the two. This could be related to the engine, as in my previous experience game engines sometimes do not handle polygon clipping or texture clipping very well.

At one point we also saw a minor issue: some parts in some designs are flexible (e.g. hoses), and this caused a problem in the demo because the design was put through as a fixed model, meaning that some of the flexible parts did not line up with the rest of the design. Again, it’s still a beta.

Aside from the visual tools, there were also some adjustment tools. Using one of the options, certain objects and surfaces could be selected and adjusted for color, transparency, and material. I somehow changed the wheel rims to pink concrete, which also rendered them immune to the bubble clipping tool. Making the bonnet transparent was interesting as well. Alongside the adjustment tool was a laser measurement tool that provided a means to measure point-to-point distances, much as IR laser distance pointers work in real life.

The simulation also offered a pencil tool, to write in the air (like Tilt Brush), as well as a note-taking tool that provided a whiteboard to write on in a 2D fashion. It was at this point that I learned that objects could be picked up as well, meaning that I could pick up my 3D writing and place it on or through the car, or pick up the whiteboard and place it elsewhere. I was unable to write on the reverse of the whiteboard, interestingly enough.

So then I broke the simulation. I managed to pick up the car. Picking up a supercar so easily was somewhat amusing, especially as I was able to use my other hand to operate the previous tools and look through the car. I asked about picking up items in a multi-user environment, and was told that it is a first come, first served arrangement. I tried to let go of the car, but somehow the simulation kept it linked to my hand. The shadow of the car was still fixed from when it was on the floor, at which point the person giving me the demo stated that one of the issues still to be solved in Holodeck is global illumination and point illumination.
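The first come, first served arrangement for picking up items could be modeled along the lines of the sketch below. This is purely an illustration: `GrabRegistry` and its methods are hypothetical names, and nothing here reflects how Holodeck actually tracks ownership.

```python
class GrabRegistry:
    """Hypothetical record of which user currently holds each object."""

    def __init__(self):
        self._holders = {}  # object_id -> user_id of the current holder

    def try_grab(self, object_id, user_id):
        """Grant the object to the first user who asks; refuse later users."""
        holder = self._holders.setdefault(object_id, user_id)
        return holder == user_id

    def release(self, object_id, user_id):
        """Free the object, but only if this user is the one holding it."""
        if self._holders.get(object_id) == user_id:
            del self._holders[object_id]
            return True
        return False


registry = GrabRegistry()
print(registry.try_grab("car", "host"))   # True: first request wins
print(registry.try_grab("car", "guest"))  # False: already held by "host"
```

In a scheme like this, a release that never reaches the registry leaves the object tied to its holder, which is the kind of stuck state described above.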

Near the end of the demo, I played around with a few other tools, and ended up in the model loading area, where new models can be pulled into the scene. My thoughts went immediately to video game development: developers could exist inside a room of their game and collaborate with the writers on where objects should be placed for the best atmosphere. NVIDIA’s main focus for Holodeck seems to be on commercial industries first, such as automotive and architecture, although I can see plenty of game development use too.

As an aside, the ability to bring in local models is going to be a tough hill to climb. If developers in different geographical regions have local models they want to import, but not everyone has the latest versions of those models, it may require gigabytes of data transfer between all the parties before such a model can be imported. This could limit the ability to interact unless all the assets are shared the night before, allowing synchronization to happen overnight.
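One way to keep such transfers down would be to compare asset manifests before a session and send only the files that differ. The sketch below is an assumption about how that could be done, not a description of Holodeck: the function names and the manifest layout (relative path mapped to a SHA-256 digest and a size) are hypothetical.

```python
import hashlib
from pathlib import Path


def file_digest(path, chunk_size=1 << 20):
    """SHA-256 of a file, read in chunks so large models fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(asset_dir):
    """Map each file's relative path to (digest, size in bytes)."""
    root = Path(asset_dir)
    manifest = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            name = path.relative_to(root).as_posix()
            manifest[name] = (file_digest(path), path.stat().st_size)
    return manifest


def files_to_transfer(host_manifest, guest_manifest):
    """Host files that the guest is missing or holds in a different version."""
    return sorted(
        name
        for name, entry in host_manifest.items()
        if guest_manifest.get(name) != entry
    )


def transfer_size(host_manifest, names):
    """Total bytes the guest would need to download for the given files."""
    return sum(host_manifest[name][1] for name in names)
```

Each participant would run `build_manifest` on their local asset folder and exchange the small manifest rather than the models themselves. `files_to_transfer` then lists what still has to be copied, and `transfer_size` estimates how long the overnight sync might take.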

Unfortunately, the demo was not of a multi-user experience, but from the snippet of experience I had, it is clear that this is the next stage of VR interactivity and multi-user is going to be a part of it. I was unsure whether to be amazed by the demo, or to actually say ‘well this is what they promised, why was I expecting anything different?’. There are two main things that I think NVIDIA wants out of Holodeck that were not obvious from the discussions: fully interactive physics (see above for the redefinition of PhysX, for example), and haptic feedback in a physical environment. From our discussions with NVIDIA, it sounded very clear that haptic feedback was a third-party problem to solve, not NVIDIA’s own. From NVIDIA’s standpoint, it sounds like they will implement an API for haptic feedback, but how that manifests will be someone else’s problem.

*Images for the Hands-On were taken from NVIDIA’s presentations, as I forgot to set up a video camera while taking the demo.

Holodeck Early Access

Interested parties may fill out NVIDIA’s Holodeck early access application form. Note that this is separate from the Holodeck news notification form.